Google Gemini’s New Update Blurs Line Between Private Photos and AI Images

Apr 23, 2026

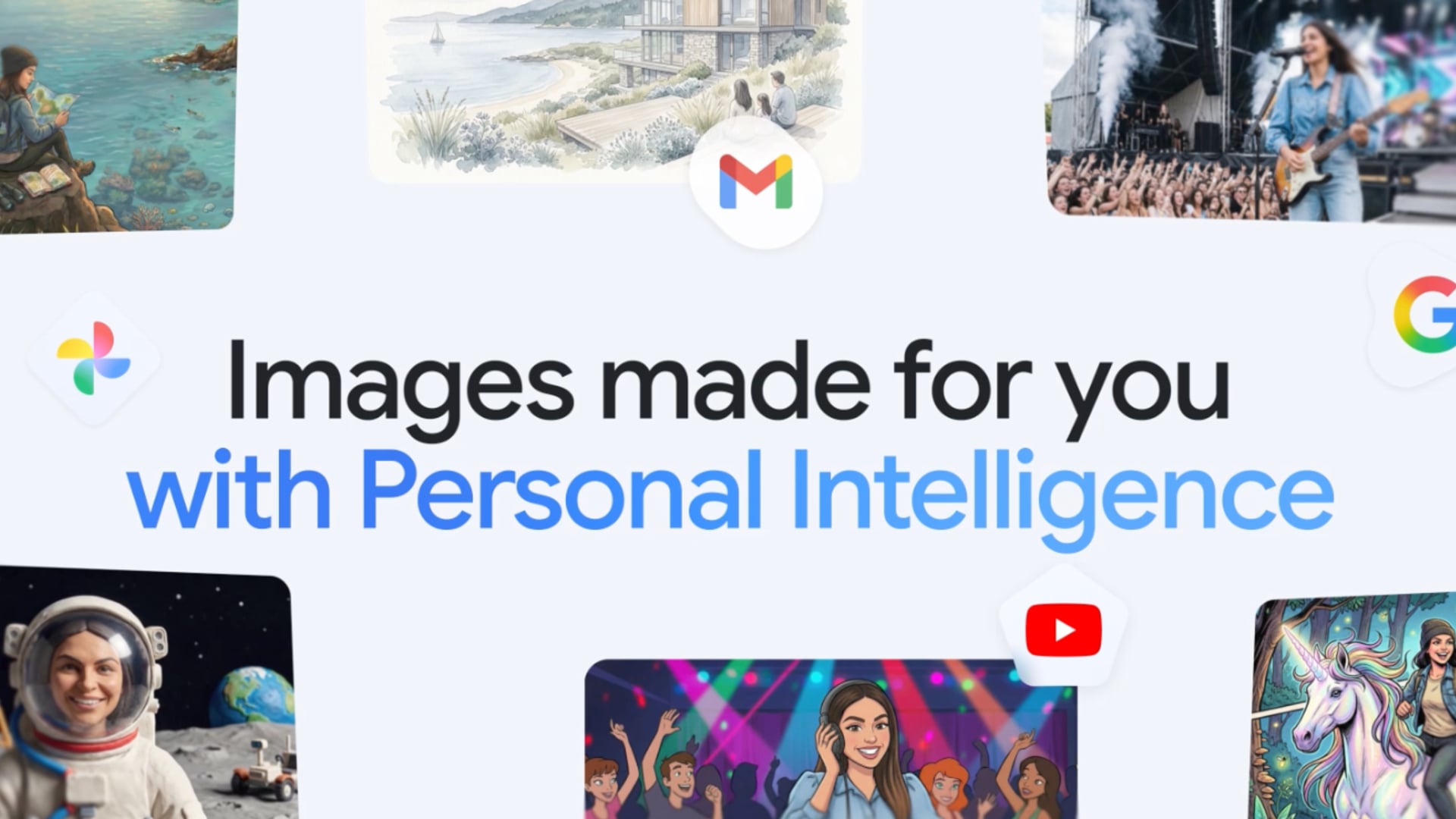

Google is tightening the link between personal data and AI image generation, and the latest Gemini update introduces a direct connection to Google Photos.

The feature brings AI image generation from Google Photos into the Gemini app, allowing users to create visuals that draw on stored images, preferences, and labeled people or pets. It is part of a wider push around what Google calls Personal Intelligence, which aims to make AI output feel more context-aware and tailored to individual users.

Google Gemini And Personal Photo Data

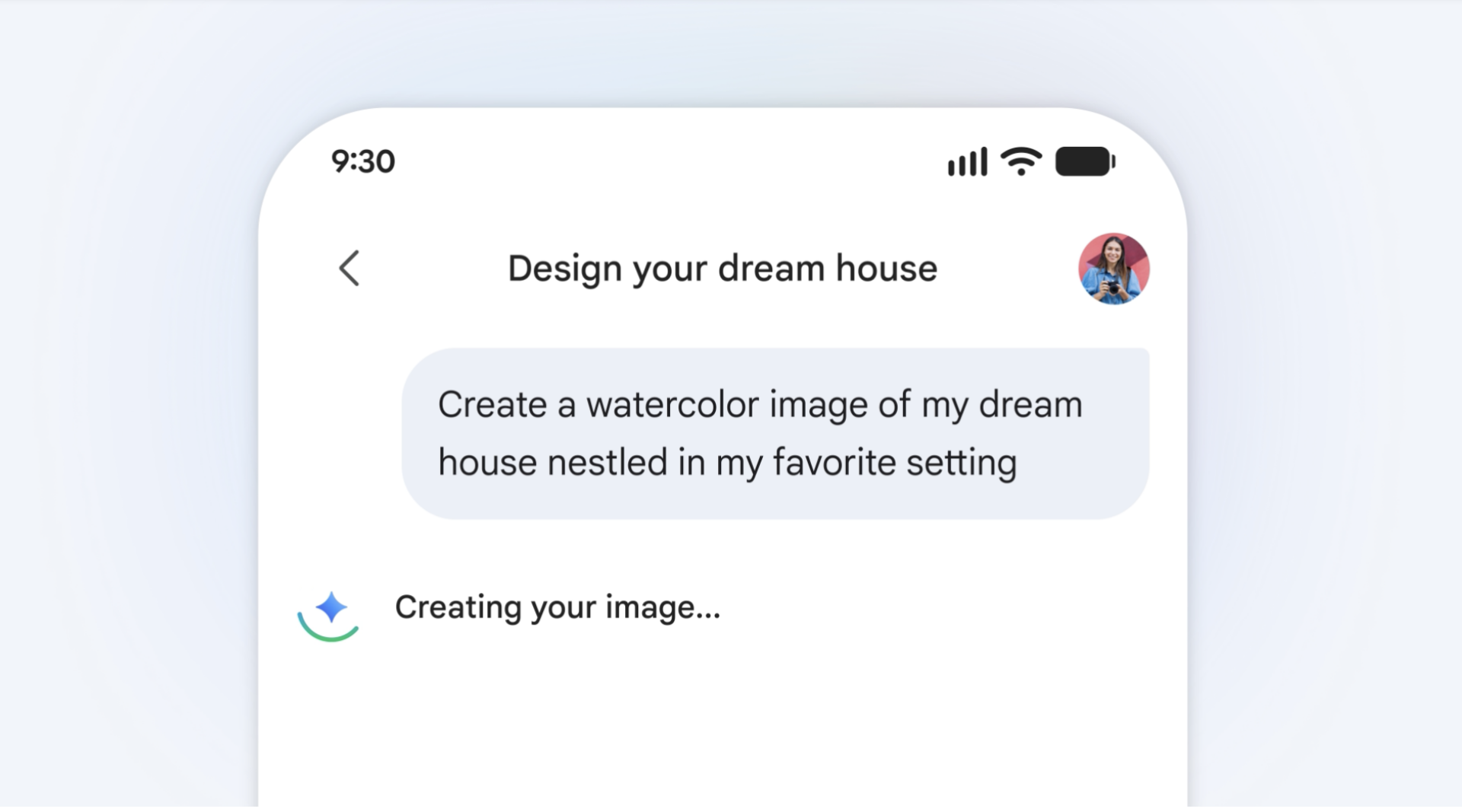

At the center of this update is the integration of Gemini with Nano Banana 2 and Google Photos. Instead of relying on detailed text prompts or manual image uploads, the system uses existing photo libraries and user data to fill in context automatically. Google describes this as removing the need for long prompts by letting the model infer preferences and visual details from connected apps.

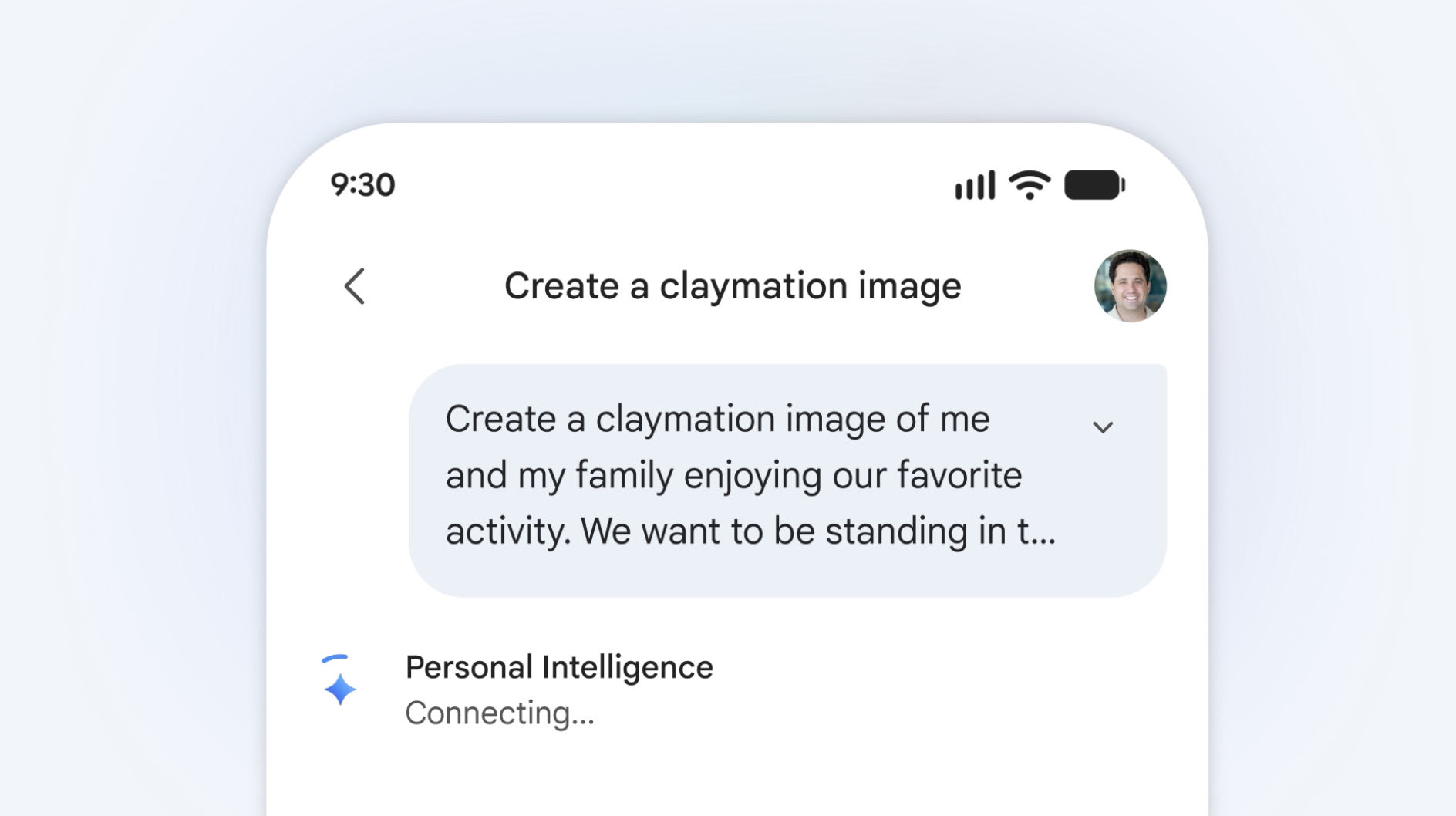

In practice, this means users can issue short prompts like “create my dream house” or “show me a sketch of my family on vacation,” and the system will attempt to generate images based on stored visual references and metadata.

Labeled faces and groups in Google Photos can also be used to place real people and pets into generated scenes, expanding how personal archives are reused inside AI workflows.

Google also introduces refinement tools. If the generated image does not match expectations, users can adjust prompts, swap reference photos, or ask for corrections. A sources function shows which images were used as references for a given output.

This adds a layer of transparency, although it still depends on automated selection of personal content.

The rollout is currently limited to subscribers in the United States across Google AI Plus, Pro, and Ultra plans, with expansion planned for desktop Chrome and additional regions.

Growing Use Of Personal Data In AI Tools

This update reflects a broader industry trend where AI systems are increasingly tied to personal data ecosystems. Similar approaches are being tested across major platforms, where models use email, photos, and browsing history to generate more context-aware outputs.

The goal is to reduce friction in prompting, but it also increases the amount of private material feeding into generative systems.

For creators and photographers, this has practical implications. Personal image libraries often include client work, behind-the-scenes material, and private content that was never intended for reuse in synthetic outputs. Even when systems are opt-in, the boundary between stored media and generated media becomes less visible.

Risks And Questions Around AI Image Use

The use of personal photos in AI generation introduces several risks that are still being debated. One concern is unintended reuse of private images in generated content. Even with safeguards, automated selection of reference material can lead to unexpected combinations of personal data.

Another issue is consent. Labeled faces in Google Photos often include other individuals, which means their images could be used in generated scenes without their direct participation in the AI process. This raises questions around ownership and permission when personal archives serve as reference context for generation rather than as direct training data.

There is also the question of output control. Once personal images are embedded into AI generation systems, it becomes harder to fully predict how they will appear in new contexts. Even with refinement tools, the system still decides how context is interpreted.

Google states that the Gemini app does not directly train its models on private Google Photos libraries, instead using limited prompt and response data to improve performance.

However, the integration of personal archives into generation workflows continues to blur the line between private storage and creative input.

As AI image tools become more personalized, the balance between convenience and control becomes a key issue. For users working with sensitive or professional photography archives, the question is not only what the tools can create, but also how much of their visual history is being quietly repurposed in the process.

Alysa Gavilan

Alysa Gavilan has spent years exploring photography through photojournalism and street scenes. She enjoys working with both film and mirrorless cameras, and her fascination with the craft has grown over the decades. Inspired by Vivian Maier, she is drawn to capturing everyday moments that often go unnoticed.
