Google Gemini Adds Hidden AI Watermark Detection to Expose Fake Images

Nov 26, 2025


AI image verification is becoming more important than ever, and Google’s new integration with the Gemini app shows just how seriously the company is taking the problem.

With generative media exploding across the web, it is no longer enough simply to question whether something “looks real.” By embedding a hidden signal in AI‑created or AI‑edited images, Google wants to help you know at a glance what you are looking at, and to do that, it is rolling out its SynthID watermark detection right into Gemini.

How It Works

Google’s verification system relies on its own SynthID technology, which hides a digital watermark inside images that are either entirely generated by Google AI or significantly edited by it. The signal isn’t visible to the human eye but it can be detected by the right tools. In this case, the detector lives in your Gemini app.

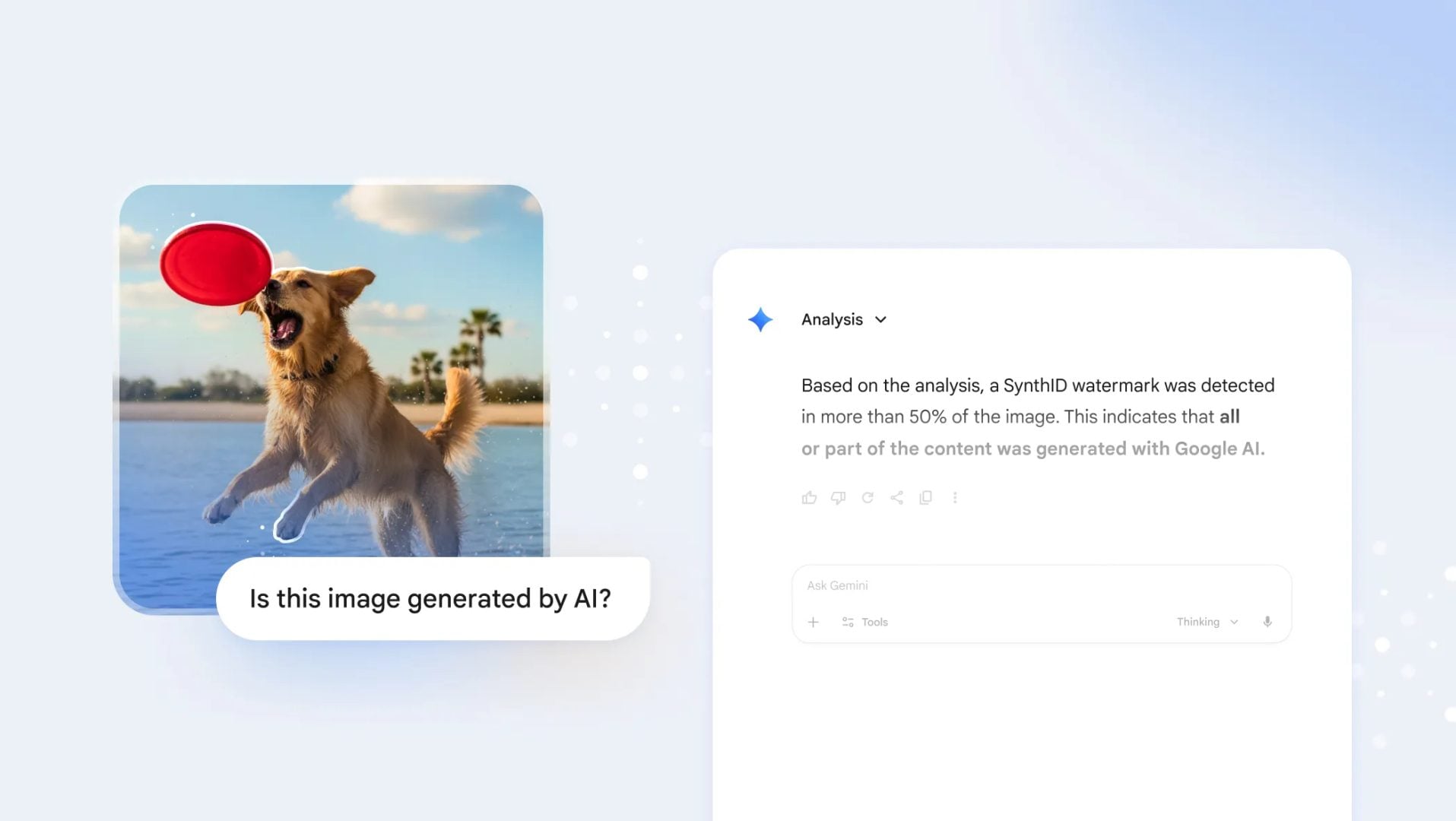

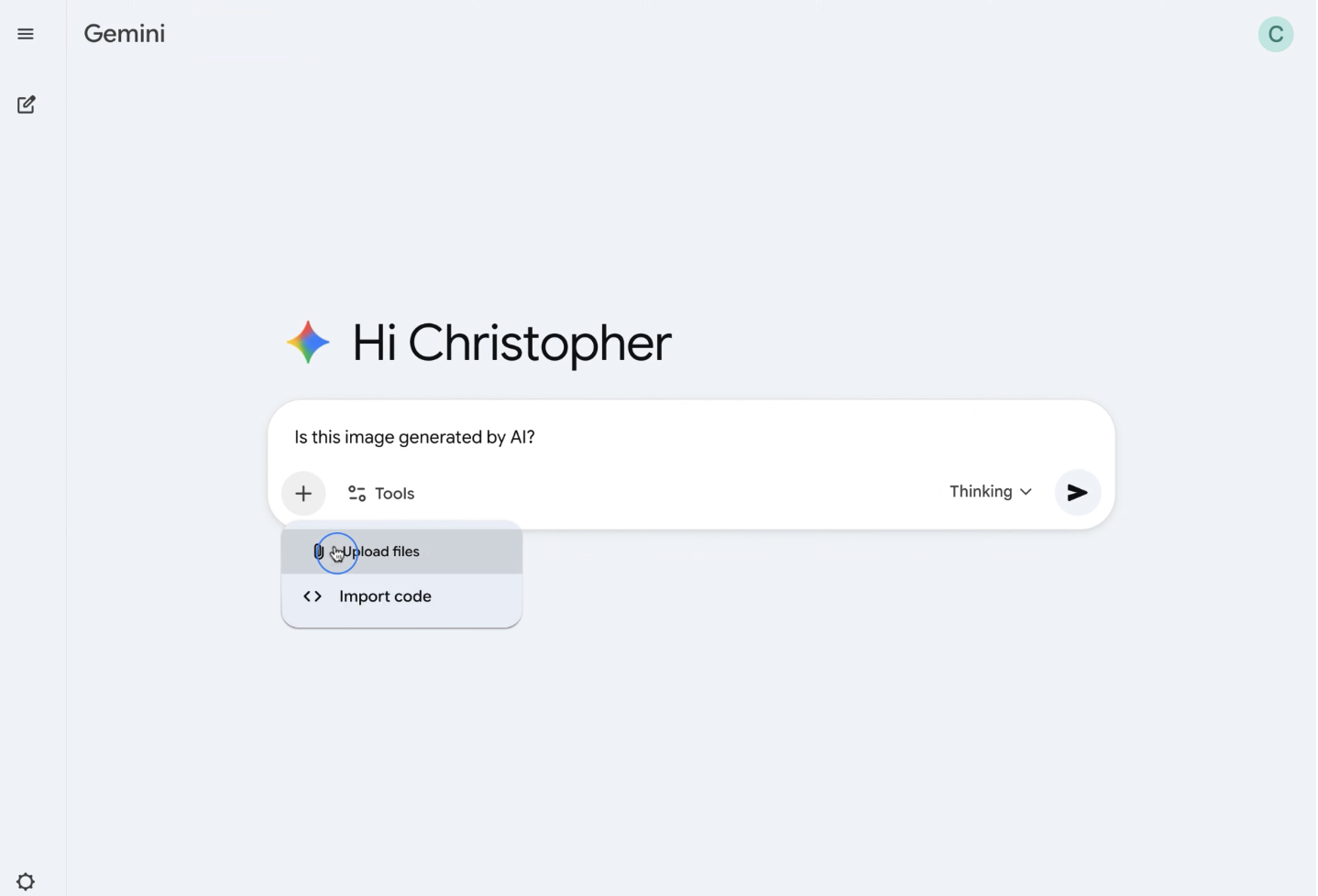

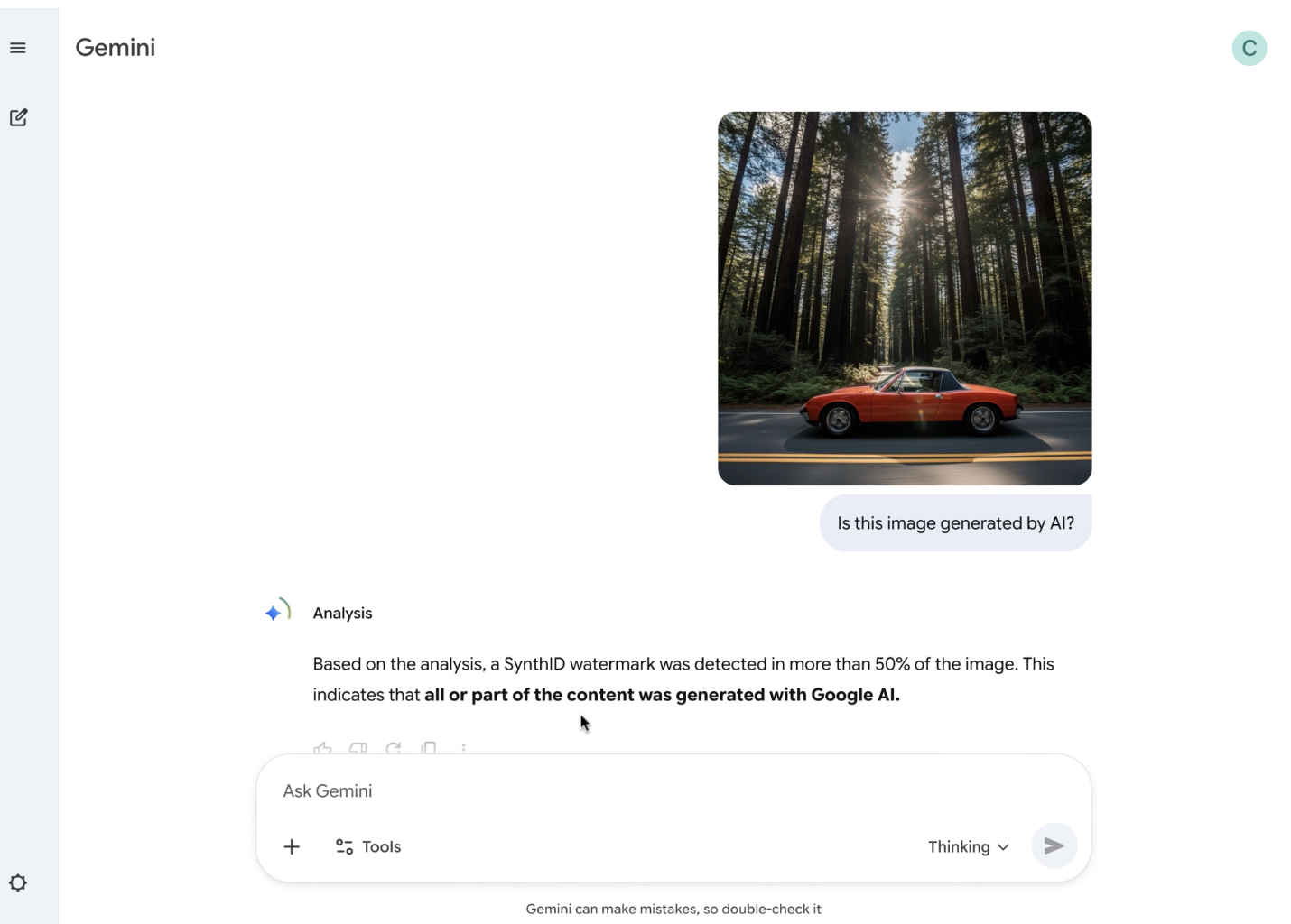

To verify an image, you simply upload it into Gemini and ask a question like “Was this created with Google AI?” or “Is this AI generated?” Gemini will then inspect the image for the embedded watermark.

If SynthID is detected, the app will respond with contextual information about how the image likely originated or was manipulated. The response doesn’t just say yes or no. Gemini actually applies its own reasoning on top of the watermark to help you understand what the signal means.

This system builds on work Google first launched in 2023, and it follows earlier experiments with detection portals used by journalists and media professionals. According to Google, more than 20 billion pieces of AI-generated content have already been watermarked using SynthID, a volume that underscores how widely the technology is already in use.

Why This Move Matters

The spread of high‑fidelity generative media has made it more difficult to distinguish real photographs from synthetic ones. That matters not only for casual viewers, but also for people who rely on images in news reporting, research, social media, and more. By making verification tools accessible to everyone through Gemini, Google is giving you a way to confidently interrogate what you see.

This feature also advances transparency. For people working in content creation and journalism, image provenance is a key concern. Knowing whether something was generated by an AI helps you make better-informed decisions and avoid unintentionally amplifying manipulated or synthetic media. Over time, these verification tools can become part of standard digital literacy and help more people understand how modern images are made.

Google is also looking ahead. It plans to expand SynthID verification into other formats, including video and audio, and to bring this functionality into more of its own products like Search. That means that in the near future, you could check if a video or sound clip came from a generative model just like you can check an image now.

Google is also building out support for C2PA (the Coalition for Content Provenance and Authenticity), an open standard designed to help track the origin and authenticity of digital content. For Nano Banana Pro–generated images from Gemini, Vertex AI or Google Ads, Google will embed C2PA metadata.

Eventually, you will be able to verify not only that an image was AI‑generated, but also which model or service made it and whether it was edited after that. Google also expects to extend this to credentials for content created outside of its own products.
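For readers curious what C2PA metadata looks like at the file level, here is a minimal Python sketch. It assumes the image is a JPEG and relies on the fact that C2PA manifests in JPEG files are carried as JUMBF boxes inside APP11 segments. The function names `iter_jpeg_segments` and `has_c2pa_marker` are hypothetical, made up for this illustration. The check is only a heuristic for whether such metadata appears to be present: it does not validate signatures, it does not read the manifest, and it has nothing to do with SynthID, which lives in the pixels rather than in the metadata.

```python
import struct

APP11 = 0xEB  # JPEG marker segment used to carry JUMBF boxes (C2PA manifests)
SOS = 0xDA    # start of scan: compressed image data follows
EOI = 0xD9    # end of image


def iter_jpeg_segments(data: bytes):
    """Yield (marker, payload) for each header segment before the image data."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        if marker == 0xFF:  # fill byte before a marker, skip it
            pos += 1
            continue
        if marker in (SOS, EOI):
            return  # header segments are over
        (length,) = struct.unpack(">H", data[pos + 2 : pos + 4])
        if length < 2:
            raise ValueError(f"invalid segment length at offset {pos}")
        # The length field counts itself (2 bytes) plus the payload.
        yield marker, data[pos + 4 : pos + 2 + length]
        pos += 2 + length


def has_c2pa_marker(data: bytes) -> bool:
    """Heuristic: True if an APP11 segment looks like a C2PA JUMBF box."""
    return any(
        marker == APP11 and b"jumb" in payload and b"c2pa" in payload
        for marker, payload in iter_jpeg_segments(data)
    )
```

To try it on a file, read the bytes with `open(path, "rb").read()` and pass them to `has_c2pa_marker`. A `False` result only means no C2PA metadata was found in the file; metadata is easily stripped when images are re-saved or shared, so its absence says nothing certain about how the image was made.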

What This Means for Photographers and Image Creators

If you are a photographer, designer or content creator, this rollout affects you in several ways. For one, you may begin to see your own work treated differently. For example, if an image lacks SynthID or C2PA credentials, some people may question its origin. That could influence how images are shared or licensed.

On the other hand, if you experiment with generative tools like Google’s own Nano Banana Pro or other models, the presence of a watermark or credential can help you establish provenance for your AI-created work. It might also boost trust from clients, audiences or publications suspicious of synthetic content.

Finally, because verification will expand to more formats, you might use similar tools in your own workflow. For instance, a filmmaker could verify audio or video for authenticity during editing or distribution. This kind of transparency can push the industry toward higher standards around synthetic content.

Google’s move to integrate image verification directly into Gemini is part of a broader push for responsible AI. By giving users a built-in way to check image authenticity and embedding invisible signals in the content, Google is working to create a more transparent media environment.

Alysa Gavilan

Alysa Gavilan has spent years exploring photography through photojournalism and street scenes. She enjoys working with both film and mirrorless cameras, and her fascination with the craft has grown over the decades. Inspired by Vivian Maier, she is drawn to capturing everyday moments that often go unnoticed.


One response to “Google Gemini Adds Hidden AI Watermark Detection to Expose Fake Images”

Note: If you ask Gemini, it will only detect Google AI-created illustrations. Other AIs like ChatGPT will not detect the SynthID.