This AI tool fills in the gaps in any image, even portraits

Jul 17, 2019

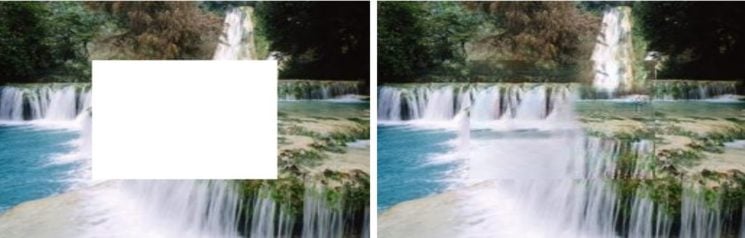

From relighting images to removing backgrounds, AI tools have many applications in photography. A new AI-powered tool introduced by Chinese scientists can fill in the blank spaces in all kinds of photos. Whether it is the front of a building, a landscape, or even a portrait, the AI is trained to fill in the gap surprisingly accurately.

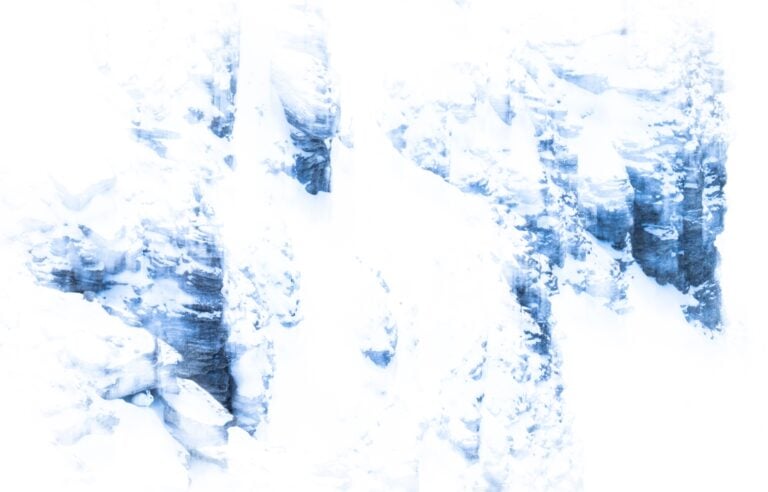

The researchers have named the tool PEN-Net (Pyramid-context ENcoder Network). It was developed as a collaborative project between Sun Yat-sen University in Guangzhou and the Microsoft Research lab in Beijing. The technique is called inpainting, and it uses deep learning to fill in the blanks in one of two ways. It either copies existing patches of the photo into the missing area or generates completely new content for it. For example, in photos of faces, the tool has to recreate the missing part of the image entirely from scratch. The results are pretty realistic and they are achieved fast, and the scientists note that the tool is also very quick to learn. Here are a couple of examples:

According to the researchers, PEN-Net isn’t the first inpainting method to be introduced. However, it gives more accurate results than previously developed methods. The lead author of the project, Yanhong Zeng, told Digital Trends that their model preserves both structure and texture coherence in inpainting results. “We are excited to see that our model is capable of generating clearer textures and more reasonable structures than previous works,” Zeng added.

PEN-Net could have many applications, and as you can imagine, they would mainly be focused on image editing. Zeng confirmed this to Digital Trends, saying that the team will work on applying the technology to image editing programs. It could be particularly useful for object removal and old photo restoration, and the latter was my first thought. Along with AI-powered colorization tools, we could get apps that help bring old and damaged photos back to life.

If you’d like to read the full paper about PEN-Net, it’s available on arXiv.

[via Digital Trends; image credits: Sun Yat-sen University, Microsoft Research lab Beijing]

Dunja Đuđić

Dunja Djudjic is a multi-talented artist based in Novi Sad, Serbia. With 15 years of experience as a photographer, she specializes in capturing the beauty of nature, travel, concerts, and fine art. In addition to her photography, Dunja also expresses her creativity through writing, embroidery, and jewelry making.

6 responses to “This AI tool fills in the gaps in any image, even portraits”

Would have been handy for my thesis!

Other than (poorly) recreating the face, it’s just the Clone tool

A bit better, because you don’t need any original face. It will create the missing part immediately from its own database.

Could be an interesting tool when taking pictures of a large crowd of protesters. Remove all the people’s faces, let the AI recreate them at will, and publish it!

could be useful, to “fill in the blanks” when it comes to pictures of interference patterns, reconstructing the orbitals of the particles, like qubits, under observation, thus inferring the entire wavefunction of the qubit

Why aren’t these AI available as tools online?