Are your social media photos ending up in a law enforcement database?

Feb 19, 2020

Allen Murabayashi

Facial recognition is an incredibly useful consumer tool for organizing our burgeoning photo albums. Companies like Google and Apple have slowly integrated machine learning algorithms into their consumer photo products, which allow you to search by keywords without the need for manual tagging, or to simply click on a face to see more photos of that person.

Programmers have been gradually improving facial recognition algorithms, but the technology is not without problems or critics. While today’s best algorithms boast accuracy rates in the high 90 percent range – approaching or outperforming humans – they still struggle with people of color, and their error margins remain large enough to raise serious concerns. In California, a test that compared the faces of state legislators against a mugshot database using Amazon Rekognition software produced 26 false positives. The error rate led to a 3-year moratorium on the use of facial recognition software in police body cams.
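To see why even a small error margin matters once a face is searched against a large database, here is a minimal arithmetic sketch. The false match rate and database sizes are hypothetical illustration values, not figures from the California test or from any vendor, and the comparisons are assumed to be independent, which real systems only approximate.

```python
def expected_false_matches(false_match_rate: float, gallery_size: int) -> float:
    """Expected number of wrong candidates when one probe photo is compared
    against every face in a gallery (assumes independent comparisons)."""
    return false_match_rate * gallery_size


def prob_at_least_one_false_match(false_match_rate: float, gallery_size: int) -> float:
    """Probability that a single search returns at least one wrong match."""
    return 1.0 - (1.0 - false_match_rate) ** gallery_size


if __name__ == "__main__":
    rate = 0.001  # hypothetical: 0.1% chance of a false match per comparison
    for size in (1_000, 25_000, 1_000_000):  # hypothetical gallery sizes
        print(size,
              expected_false_matches(rate, size),
              round(prob_at_least_one_false_match(rate, size), 4))
```

The point of the sketch is that a per-comparison error rate that sounds tiny still scales linearly with the number of faces searched.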

In this week’s episode of PhotoShelter’s photography and culture podcast, Vision Slightly Blurred, Sarah Jacobs (@sarahjake) and Allen Murabayashi (@allen3m) discuss the controversy surrounding Clearview AI and the implications for facial recognition going forward.

While most consumers can recognize the obvious benefit for their personal repository of photos, the privacy implications at scale are highly concerning when third parties start to aggregate images and make the data commercially available.

The New York Times recently reported on Clearview AI, a shadowy start-up which combined its photo recognition app with billions of publicly available photos that it scraped from sites like Facebook, Instagram, Twitter, YouTube and Venmo. Clearview has been selling the resulting dataset along with a custom app to law enforcement organizations with no governmental oversight. Take or upload a photo of a person, and Clearview AI returns all the matching photos.

In multiple interviews, the firm’s 31-year-old founder, Hoan Ton-That, has shown a limited understanding of, and concern for, the ethical and moral issues arising from the use of his technology, and has been unable to resolve contradictions between his statements and those of his investors.

How does facial recognition work?

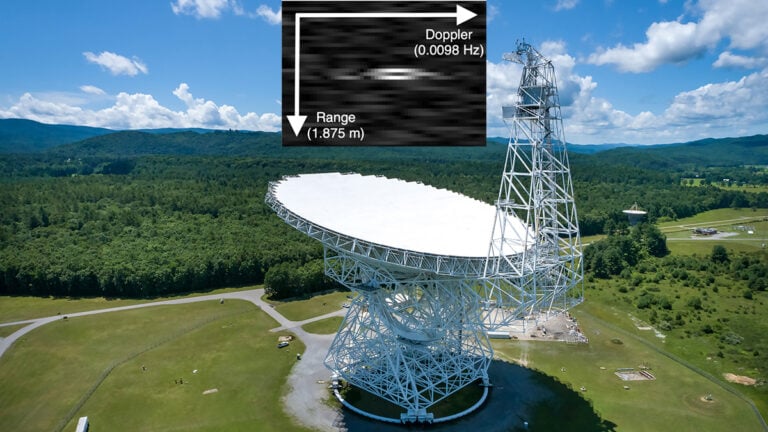

A number of underlying algorithms power most of the current facial recognition systems, but generally speaking, they try to identify the geometric position of facial features while correcting for lighting conditions, head tilt, etc. More sophisticated systems that use 3D depth information (e.g. your iPhone) are lighting-independent and, in theory, provide higher accuracy. Deep learning-based systems have made facial recognition much faster and more viable.
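As a rough illustration of the "geometric position of facial features" idea, the sketch below normalizes a handful of landmark points so that position, in-plane head tilt and image scale drop out, then compares two faces by distance. It is a simplified toy, not how Clearview AI or any named product works: the landmark names, the `same_person` helper and the `0.1` threshold are assumptions made for the example, and it does not correct for lighting or for a head turned away from the camera.

```python
import math


def normalize_landmarks(landmarks):
    """Re-express (x, y) landmarks in a frame where the eyes sit at
    (-0.5, 0) and (0.5, 0), removing position, in-plane tilt and scale."""
    lx, ly = landmarks["left_eye"]
    rx, ry = landmarks["right_eye"]
    cx, cy = (lx + rx) / 2, (ly + ry) / 2      # midpoint between the eyes
    eye_dist = math.hypot(rx - lx, ry - ly)    # scale reference
    angle = math.atan2(ry - ly, rx - lx)       # tilt of the eye line
    cos_a, sin_a = math.cos(-angle), math.sin(-angle)

    features = []
    for name in sorted(landmarks):             # fixed order keeps vectors comparable
        x, y = landmarks[name]
        x, y = x - cx, y - cy                  # translate to the eye midpoint
        x, y = x * cos_a - y * sin_a, x * sin_a + y * cos_a  # undo the tilt
        features.extend((x / eye_dist, y / eye_dist))        # undo the scale
    return features


def same_person(face_a, face_b, threshold=0.1):
    """Hypothetical decision rule: faces match if their normalized
    landmark vectors are closer than `threshold`."""
    distance = math.dist(normalize_landmarks(face_a), normalize_landmarks(face_b))
    return distance < threshold


if __name__ == "__main__":
    face = {"left_eye": (30, 40), "right_eye": (70, 40), "nose": (50, 60),
            "mouth_left": (38, 80), "mouth_right": (62, 80)}
    # Same geometry, photographed twice as large and shifted in the frame.
    bigger = {k: (2 * x + 15, 2 * y + 5) for k, (x, y) in face.items()}
    print(same_person(face, bigger))
```

Deep learning-based systems replace the hand-picked landmarks with a learned feature vector, but the final step is often still a distance comparison against a threshold like the one above.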

Facial recognition was initially only accurate with near-frontal images. Researchers often benchmark facial recognition software against the Labeled Faces in the Wild (LFW) dataset – a publicly available collection of images used for face verification that comes with numerous warnings about its limitations (e.g. poor representation of minorities, the elderly, etc.). In 2014, Facebook’s DeepFace algorithm achieved 97.35% accuracy against LFW.
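Face verification benchmarks are generally scored on pairs of images: the system says "same person" or "different person" for each pair, and accuracy is the share of pairs it gets right. The sketch below assumes that scoring scheme and uses made-up distances and a made-up threshold; it is not the official LFW evaluation code.

```python
def score_pairs(pairs, threshold):
    """Score a verification system on labeled pairs.

    pairs: list of (distance, is_same_person) tuples, where a smaller
    distance means the system thinks the two faces are more alike.
    Returns accuracy plus the counts of each kind of error.
    """
    correct = false_positives = false_negatives = 0
    for distance, is_same in pairs:
        predicted_same = distance < threshold
        if predicted_same == is_same:
            correct += 1
        elif predicted_same:
            false_positives += 1   # two different people judged a match
        else:
            false_negatives += 1   # the same person judged a non-match
    return {
        "accuracy": correct / len(pairs),
        "false_positives": false_positives,
        "false_negatives": false_negatives,
    }


if __name__ == "__main__":
    # Made-up distances for six image pairs.
    toy_pairs = [(0.2, True), (0.3, True), (0.9, False),
                 (0.4, False), (0.7, True), (1.1, False)]
    print(score_pairs(toy_pairs, threshold=0.5))
```

A single accuracy figure hides the split between the two error types, which is why a headline number alone says little about how often a system will wrongly flag someone.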

In recent years, researchers have tried to improve recognition of faces turned away from the camera. Using the People in Photo Albums (PIPA) database extracted from publicly available Flickr images, researchers like Facebook’s Yaniv Taigman have significantly improved recognition of non-frontal images, meaning people walking in the background have a higher likelihood of being identified.

Facial recognition is distinct from facial detection, which is the technology found in many cameras to aid focus, or in auto-tagging to identify the existence of people within a photo. Sony’s flagship cameras, for example, have very powerful face and eye detection and tracking capabilities that make them more accurate and useful than zone-based focusing systems.

Most consumer services employing facial recognition (e.g. Apple Photos, Google Photos, etc.) require your images to be processed in the cloud. A few, like Lightroom Classic CC, perform facial recognition on the desktop – a processor-intensive operation that can take hours or days to churn through a large number of photos.

Clearview AI has your photos

Clearview scoured social media sites and downloaded publicly available images without the consent of the hosts. A number of tech giants have sent cease and desist letters to Clearview AI, claiming that the scraping of photos violated their terms of use. Ton-That has dubiously claimed a First Amendment right to the data, and stated that what he does is no different from how Google crawls and indexes websites.

In its defense, Google pointed out that a webmaster can opt out of having their website included in the Google search engine. But whatever the case, there are few, if any, laws to protect consumer privacy and prevent Ton-That from collecting images.

Other nation states have and use similar technologies

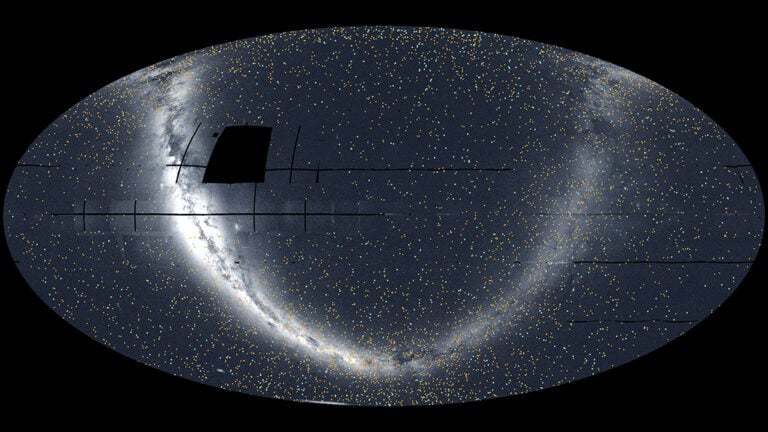

From a global perspective, Clearview AI’s technology isn’t unique. China and other authoritarian states already use facial recognition software to monitor citizens, from suspected criminals to political protesters to ethnic minorities.

And while an estimated 50% of Americans’ images already reside in law enforcement databases, Clearview’s aggregation of social media photos into a single database without oversight makes it unique in the US.

The technology is finding criminals

Amidst a number of spectacularly false claims regarding the utility of its software, Clearview AI has aided the capture of a number of criminals. The NYT verified the claims in a sales presentation whereby “a person who was accused of sexually abusing a child whose face appeared in the mirror of someone’s else gym photo” was successfully apprehended.

Despite the successes, some in law enforcement have expressed concern about the technology. In New Jersey, Attorney General Gurbir S. Grewal, who oversees the police department, barred officers from using the technology.

“I’m not categorically opposed to using any of these types of tools or technologies that make it easier for us to solve crimes, and to catch child predators or other dangerous criminals,” Grewal said. “But we need to have a full understanding of what is happening here and ensure there are appropriate safeguards.”

The potential for abuse is high

Without appropriate safeguards and rules regarding the use of facial recognition in law enforcement and even in public life, the potential for abuse runs high. Imagine a person on a first date using the software to identify, then stalk another person. Imagine a rogue cop with a vendetta illegally surveilling a suspect, or an ex-spouse.

When applied against a personal collection of photos, facial recognition offers a convenient way to find and organize images. But when the technology is used against aggregated datasets and without consumer privacy protections, that convenience gives way to the potential for abuse described above.

Technology outpaces ethics which outpaces laws

In the US, laws protect the people but shouldn’t be unnecessarily burdensome to commerce. Thus, the enacting of laws logically lags significantly behind the development of new technology. In the absence of more ethically-minded CEOs, however, we do need individuals, communities and organizations to initiate discussions around limits of technology and how we want to balance technology, security and privacy.

Somewhat curiously, laws can sometimes simplify commerce. As former NJ AG Anne Milgram pointed out in The Vergecast, a federal law regarding technology can ease the burden on a start-up trying to comply with a multitude of contradictory state laws.

Facial recognition has a place in the modern world, and a blanket acceptance or rejection of the technology misses the nuanced discussions around how to make it work most effectively in society.

Cover photo by Ortynskyi Nazarii/Pond5

About the Author

Allen Murabayashi is a graduate of Yale University, the Chairman and co-founder of PhotoShelter blog, and a co-host of the “I Love Photography” podcast on iTunes. For more of his work, check out his website and follow him on Twitter. This article was also published here and shared with permission.

We love it when our readers get in touch with us to share their stories. This article was contributed to DIYP by a member of our community. If you would like to contribute an article, please contact us here.
