This video explains Google’s Super Resolution algorithm in just three minutes

May 31, 2019

There are so many new features coming to smartphone cameras these days that it can be difficult to keep up. You’ve got all kinds of HDR, fake bokeh, high resolution and high detail modes that all work in a bunch of different ways. Most of these features are there to overcome limitations in the tiny sensors that smartphones tend to contain.

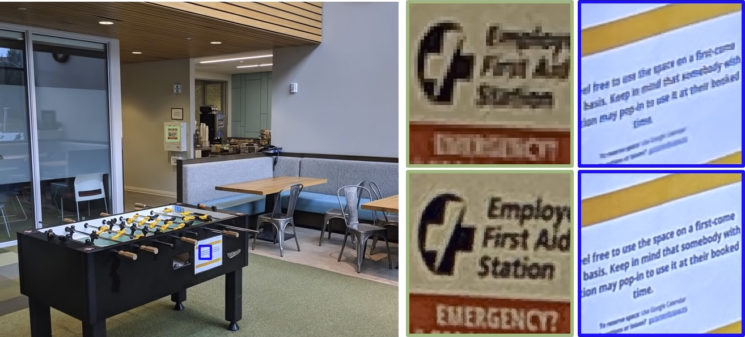

One such feature is Google’s Super Resolution algorithm, a computational photography technique that essentially replaces the demosaicing process used on most digital cameras. It’s quite fascinating, it looks much better than most of the alternatives, and the way it works is pretty clever.

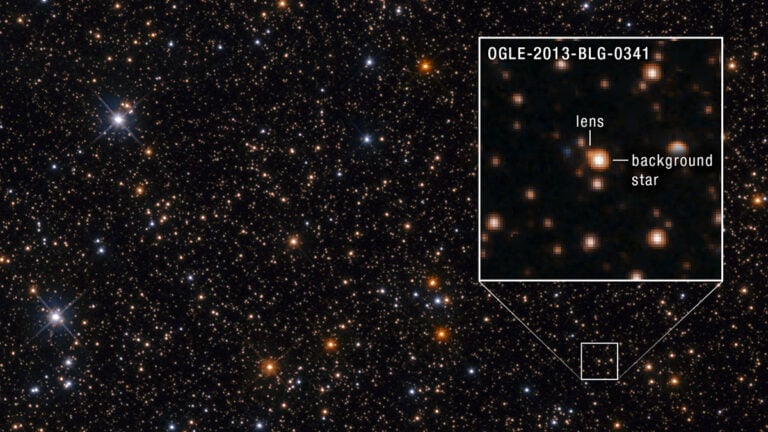

So, to understand why this is such a cool process, you need to know how Bayer and similar (like Fuji X-Trans) sensors work. These types of sensor are in just about every camera out there except for those using Sigma’s Foveon. Each pixel on the sensor is assigned a colour, and it only sees that one colour, either red, green or blue, at a particular level of brightness. This means that the sensor doesn’t see the entire scene in all colours, which results in a lot of missing information. The demosaicing process interpolates this missing data to present you with the final colour image.
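
To make the "missing information" point concrete, here is a minimal Python sketch of a Bayer sensor. It assumes the common RGGB tile layout, and the function names (`bayer_channel`, `mosaic`, `coverage`, `interpolate_green`) are made up for illustration. The interpolation step is a deliberately naive neighbour average, not what any particular camera actually does.

```python
# Assumed RGGB Bayer layout: every 2x2 tile of photosites looks like
#   R G
#   G B
def bayer_channel(row, col):
    """Return which colour ('R', 'G' or 'B') the photosite at (row, col) records."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic(rgb_image):
    """Keep only the single colour sample each photosite would record.

    rgb_image is a list of rows of (r, g, b) tuples. The result is a list of
    rows of (channel, value) pairs, so two thirds of the input values are lost.
    """
    index = {"R": 0, "G": 1, "B": 2}
    return [
        [(bayer_channel(r, c), pixel[index[bayer_channel(r, c)]])
         for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


def coverage(height, width):
    """Fraction of photosites that record each colour."""
    counts = {"R": 0, "G": 0, "B": 0}
    for r in range(height):
        for c in range(width):
            counts[bayer_channel(r, c)] += 1
    total = height * width
    return {ch: n / total for ch, n in counts.items()}


def interpolate_green(raw, row, col):
    """Naive demosaicing guess for green: average the up/down/left/right greens."""
    if raw[row][col][0] == "G":
        return raw[row][col][1]  # green was measured here, nothing to guess
    samples = []
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        r, c = row + dr, col + dc
        if 0 <= r < len(raw) and 0 <= c < len(raw[0]) and raw[r][c][0] == "G":
            samples.append(raw[r][c][1])
    return sum(samples) / len(samples)


scene = [[(10, 20, 30), (40, 50, 60)],
         [(70, 80, 90), (100, 110, 120)]]
raw = mosaic(scene)
print(coverage(4, 4))               # {'R': 0.25, 'G': 0.5, 'B': 0.25}
print(interpolate_green(raw, 0, 0))  # 65.0, a guess; the true green there was 20
```

In this toy scene the red photosite in the top-left corner never measured green at all, so the demosaiced value is an estimate built from its neighbours rather than real data.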

Sigma’s Foveon technology actually does see all three colours for every pixel on the sensor, and this is sort of what Google’s attempting to emulate with this technique.

Put simply, Google’s algorithm takes multiple images, taking advantage of the slight hand movements of the person holding the phone. The slight misalignment of the scene relative to the pixels fills in some of that missing data. After aligning all the images, the algorithm is able to pull different pieces of real colour information from real pixel data. Instead of guessing the missing information, it’s able to create three complete (in theory) red, green and blue channels.
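
The idea can be sketched in a few lines of Python. This is a heavily simplified toy, not Google's implementation: it assumes an RGGB layout, shifts that are exact whole pixels and already known, and a scene cropped so frame borders can be ignored. The real algorithm has to estimate small, sub-pixel offsets and cope with motion in the scene. The names `merge_burst` and `fully_sampled_fraction` are hypothetical.

```python
def bayer_channel(row, col):
    """Colour recorded by the photosite at (row, col), assuming an RGGB layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def merge_burst(height, width, offsets):
    """For each scene position, collect the colours the burst really measured.

    offsets holds one (dy, dx) whole-pixel shift per frame, describing how far
    the sensor moved relative to the scene (an assumption of this sketch).
    """
    seen = [[set() for _ in range(width)] for _ in range(height)]
    for dy, dx in offsets:
        for r in range(height):
            for c in range(width):
                # In this frame, scene point (r, c) lands on photosite (r+dy, c+dx).
                seen[r][c].add(bayer_channel(r + dy, c + dx))
    return seen


def fully_sampled_fraction(seen):
    """Share of scene positions with real red, green and blue measurements."""
    total = sum(len(row) for row in seen)
    full = sum(1 for row in seen for colours in row if colours == {"R", "G", "B"})
    return full / total


single = merge_burst(4, 4, [(0, 0)])
burst = merge_burst(4, 4, [(0, 0), (0, 1), (1, 0), (1, 1)])
print(fully_sampled_fraction(single))  # 0.0
print(fully_sampled_fraction(burst))   # 1.0
```

With a single frame, no position has all three colours. With four frames that happen to be offset by one pixel in each direction, every position has a real red, green and blue sample, so nothing needs to be interpolated.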

We harness natural hand tremor, typical in handheld photography, to acquire a burst of raw frames with small offsets. These frames are then aligned and merged to form a single image with red, green, and blue values at every pixel site. This approach, which includes no explicit demosaicing step, serves to both increase image resolution and boost signal to noise ratio. Our algorithm is robust to challenging scene conditions: local motion, occlusion, or scene changes. It runs at 100 milliseconds per 12-megapixel RAW input burst frame on mass-produced mobile phones.

– Google Research Team

There is no doubt that computational photography has come on in leaps and bounds over the last few years, and no doubt it will continue to do so in the coming years as smartphone cameras improve and their processors get even faster and more capable.

It’s a very cool technique, and you can read more about Handheld Multi-frame Super-resolution mode here.

[via DPReview]

John Aldred

John Aldred is a photographer with over 25 years of experience in the portrait and commercial worlds. He is based in Scotland and has been an early adopter – and occasional beta tester – of almost every digital imaging technology in that time. As well as his creative visual work, John uses 3D printing, electronics and programming to create his own photography and filmmaking tools and consults for a number of brands across the industry.

6 responses to “This video explains Google’s Super Resolution algorithm in just three minutes”

Big brother is watching

Only if he has shaky hands

Fairly interesting. The question is: do any current mobile phones have enough computing capability to do it instantly (I mean, within a few seconds), or would we have to store all the raw data for a prolonged time until final processing?

I wouldn’t think so, at least not for another 5 years. Right now, all of the processing is done after the fact.

Yes, this technique is being used in Pixel phones.

The Pixel 3 may not be the most versatile camera system on a smartphone, but it’s the most consistent one. No frills, no fiddling, just point and shoot. Best image quality, excellent portrait and low light. I have big expectations for the Pixel 4, I hope Google sticks to a single lens system again.