Adobe Teams Up with Google Gemini – Here’s Why That’s Complicated

Adobe announced at Google I/O this week that it is bringing its Adobe for creativity connector to Google Gemini. Once live, Gemini users will be…

Build a High-Res Camera Monitor from a Discarded Chromebook

Bigger, brighter, better color: these hyperbolic-sounding claims sum up the primary benefits of adding an external on-camera monitor to your…

AI vs Camera: How Google’s Pomelli Threatens Traditional Product Shoots

Google’s Pomelli Photoshoot feature uses AI to generate studio‑style product images, challenging entry‑level commercial photographers and redefining visual content creation.

Google’s Project Genie Lets You Explore AI Worlds Using Your Images. But Proceed With Caution.

Discover how Google’s Project Genie transforms your photos into interactive AI-generated worlds. Experiment, explore, and remix with caution.

Samsung Brings Google Photos to AI TVs for an Immersive Home Experience

Samsung’s 2026 AI TV lineup integrates Google Photos, letting users explore curated memories, slideshows, and AI-enhanced creative features on the big screen.

Google Nano Banana: AI Turns Selfies into Virtual Try-On Models for Online Shopping

Google’s Nano Banana uses AI to transform a selfie into a full-body digital model, letting you virtually try on clothes from Google Shopping. Explore sizes, outfits, and styles without stepping into a dressing room.

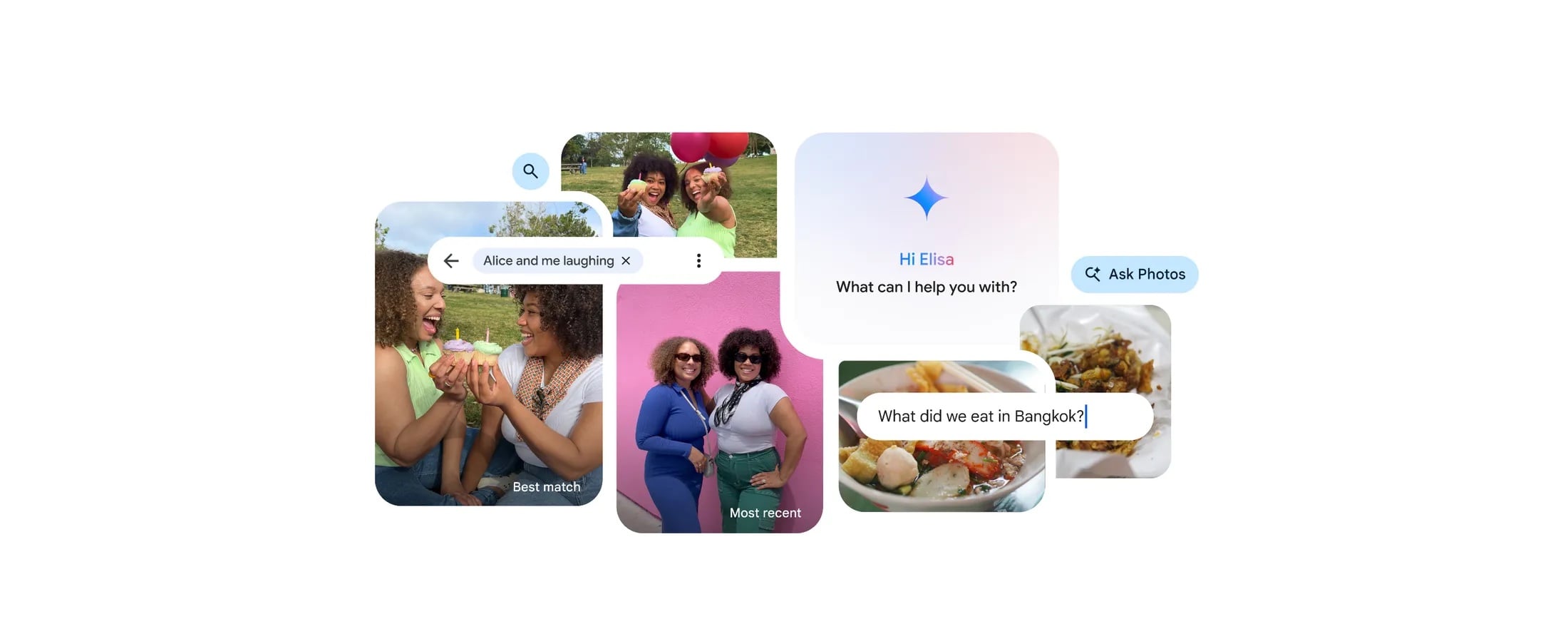

Google Pixel 10 Ask Photos Feature Blocked in Texas and Illinois

Pixel 10 owners in Texas and Illinois cannot access Google’s Ask Photos AI editor due to potential biometric data restrictions. Learn about workarounds and why Gemini AI still works.

Caira Brings Google’s “Nano Banana” Camera Intelligence to Mirrorless Systems

Camera Intelligence (CI) introduced Caira on October 7, 2025. Caira is an AI-driven Micro Four Thirds (M4/3) mirrorless…

Google AI Mode Now “Reads Your Mind” Through Your Images

Google is rolling out a major update to its AI-powered search tool, AI Mode. It introduces a visually rich experience that goes beyond traditional text-based…