This free web tool lets you explore bias in AI-generated images

Nov 10, 2022


Ever since AI became widely available and found its way into countless apps, we’ve seen as many of its drawbacks as its advantages. One of those drawbacks is racial and gender bias, and increasingly popular text-to-image generators aren’t free from it.

Enter Stable Diffusion Bias Explorer, a tool that lets you explore AI-generated images and the biases they contain. It’s free, accessible to anyone, and quick to show that both humans and artificial intelligence still have a long way to go before they get rid of deeply rooted stereotypes.

“Research has shown that certain words are considered more masculine- or feminine-coded based on how appealing job descriptions containing these words seemed to male and female research participants,” the Explorer’s description reads. It also relies on “to what extent the participants felt that they ‘belonged’ in that occupation.”

Speaking about the project, its leader, Hugging Face research scientist Dr. Sasha Luccioni, told Motherboard:

“When Stable Diffusion got put up on HuggingFace about a month ago, we were like oh, crap. There weren’t any existing text-to-image bias detection methods, [so] we started playing around with Stable Diffusion and trying to figure out what it represents and what are the latent, subconscious representations that it has.”

The tool is simple to use: you set up two groups and compare them side by side. For each group, you choose an adjective (which you can also leave blank), an occupation, and a random seed. Dr. Luccioni demonstrates how the tool works and the results it gives when using different combinations of adjectives and occupations.

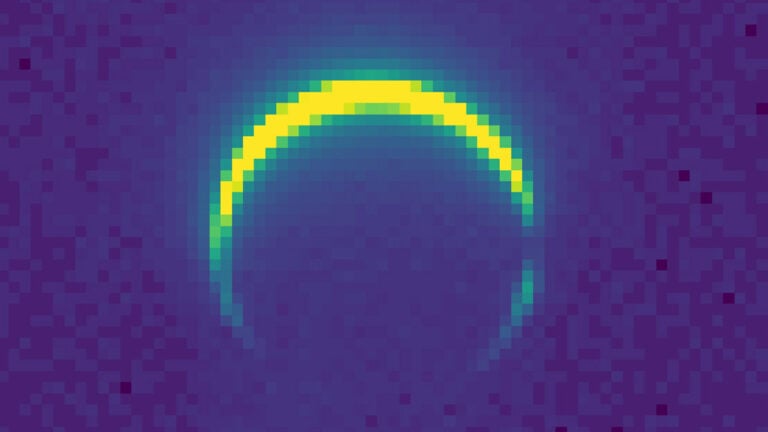

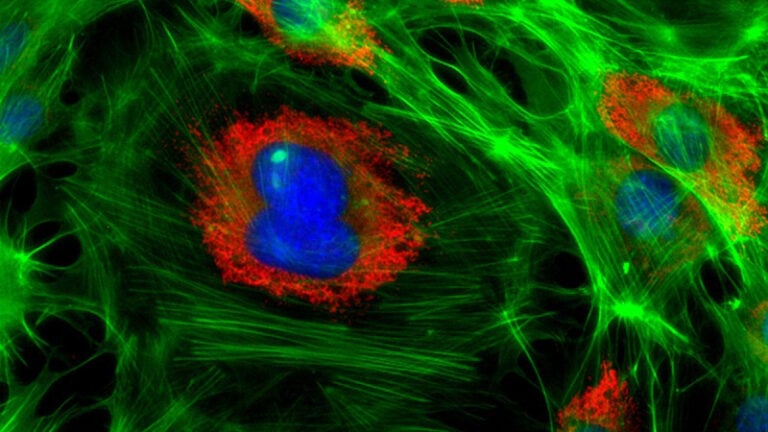

“What's the difference between these two groups of people? Well, according to Stable Diffusion, the first group represents an 'ambitious CEO' and the second a 'supportive CEO'. I made a simple tool to explore biases ingrained in this model: https://t.co/l4lqt7rTQj”

Sasha Luccioni, PhD 💻🌎🦋✨🤗 (@SashaMTL), October 31, 2022
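For readers who like to see the idea spelled out, here is a minimal Python sketch of the kind of comparison described above: two groups, each with an optional adjective, an occupation, and a seed. It does not generate any images and it is not the Explorer's actual code. The `Group` class, the `build_prompt` and `compare` helpers, and the "a photo of ..." prompt template are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Group:
    """One side of the comparison (hypothetical structure, not the tool's own)."""
    adjective: Optional[str]  # may be None or empty, like the blank field in the tool
    occupation: str
    seed: int


def build_prompt(group: Group) -> str:
    """Turn a group into a text prompt. The template is an assumption."""
    if not group.occupation.strip():
        raise ValueError("an occupation is required")
    # Skip the adjective when it was left blank.
    words = [w.strip() for w in (group.adjective, group.occupation) if w and w.strip()]
    phrase = " ".join(words)
    # Rough a/an choice based on the first letter only.
    article = "an" if phrase[0].lower() in "aeiou" else "a"
    return f"a photo of {article} {phrase}"


def compare(first: Group, second: Group) -> List[Tuple[str, int]]:
    """Return the (prompt, seed) pair for each group, ready to hand to a generator."""
    return [(build_prompt(g), g.seed) for g in (first, second)]


if __name__ == "__main__":
    pairs = compare(
        Group("ambitious", "CEO", seed=42),
        Group("supportive", "CEO", seed=42),
    )
    for prompt, seed in pairs:
        print(seed, prompt)
```

Using the same seed for both groups is a deliberate choice in this sketch: with the randomness held fixed, any difference between the two sets of images is more likely to come from the adjective than from chance.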

I played with Stable Diffusion Bias Explorer myself to see what I’d get. I used adjectives and occupations that are perceived as stereotypically masculine or stereotypically feminine, and I pretty much got what I expected: highly biased results. In some cases I used both adjectives and occupations, and in others I left the “adjective” fields blank.

Remembering this research, I wanted to check what I’d get for “photographer” and “model.” However, there was some sort of error when I selected “photographer,” and “model” wasn’t among the offered occupations. But here are some screenshots of the results I got.

To be fair, artificial intelligence was built, developed, and trained by humans. It uses human knowledge and input – and hence inherits human biases. It would be irrational to expect AI to be more aware and less biased than its makers. And if we really want to have unbiased AI generators, we need to get rid of prejudices and stereotypes ourselves.

Dunja Đuđić

Dunja Djudjic is a multi-talented artist based in Novi Sad, Serbia. With 15 years of experience as a photographer, she specializes in capturing the beauty of nature, travel, concerts, and fine art. In addition to her photography, Dunja also expresses her creativity through writing, embroidery, and jewelry making.


One response to “This free web tool lets you explore bias in AI-generated images”

No.

It’s not “racial and gender bias” if the statistics prove it.

The real problem is the “political correctness” that ignores reality, wants us to live in a fantasy world, and declares facts to be hate.