Google rivals Meta by releasing Imagen Video, its own text-to-video AI

Oct 7, 2022

Google has revealed its own text-to-video AI program, called Imagen Video. Similar to Meta’s Make-A-Video, the program allows users to generate a short video clip purely by entering descriptive text. It’s very similar to text-to-image apps such as DALL-E and Midjourney, except that this time the end product is moving pictures.

Of course, this isn’t the first iteration of text-to-video, and neither was Meta’s for that matter. A couple of months ago, DIYP reported that it would be the next big AI visual progression, and in typical AI fashion, that progress has reached us at an insanely rapid rate. But back to Google.

Google had previously announced Imagen, its text-to-image software, but decided not to make it publicly available because of what it described as problematic biases that it hadn’t yet managed to surmount. Basically, when scraping the internet for source material, you scrape the dregs of humanity and incorporate systemic racism, gender biases and all that lovely stuff into the AI. Not to mention the potential for misuse and deepfakery.

They are saying the same thing about Imagen Video: “Imagen Video and its frozen T5-XXL text encoder were trained on problematic data. While our internal testing suggests much of explicit and violent content can be filtered out, there still exists social biases and stereotypes which are challenging to detect and filter. We have decided not to release the Imagen Video model or its source code until these concerns are mitigated.”

So don’t expect this to be released as a public beta any time soon. Of course, like with the text-to-image rival apps, such ethical dilemmas won’t deter other similar releases.

Google claims that Imagen Video is a step toward a system with a “high degree of controllability” and world knowledge, including the ability to generate footage in a range of artistic styles.

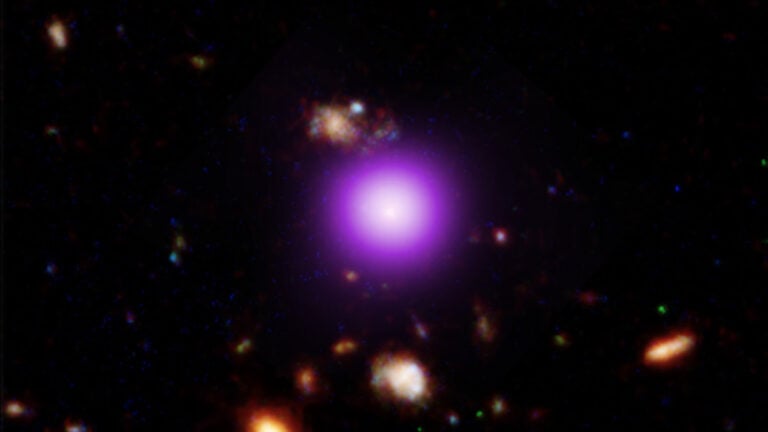

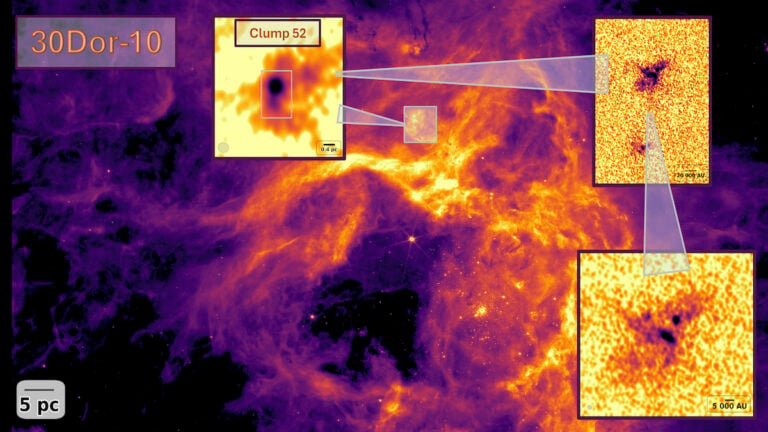

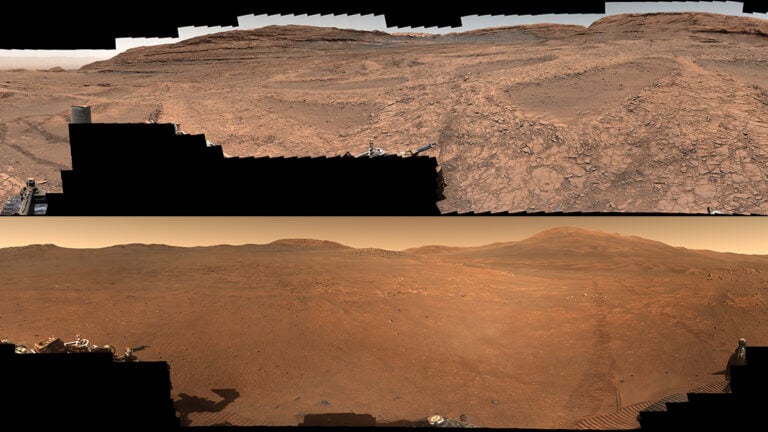

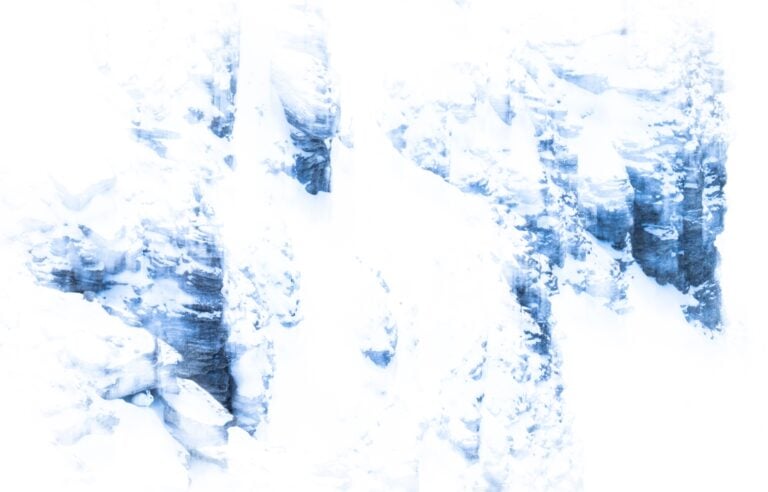

The system takes a text description and generates a 16-frame, three-frames-per-second video at 24-by-48-pixel resolution. Then, the system upscales and “predicts” additional frames, producing a final 128-frame, 24-frames-per-second video at 720p (1280×768).
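Those numbers are easier to appreciate with a bit of arithmetic. The snippet below is just a back-of-the-envelope sketch using the figures quoted above; the helper name `clip_seconds` is ours for illustration and has nothing to do with Google's actual code.

```python
from fractions import Fraction

def clip_seconds(frames: int, fps: int) -> Fraction:
    """Length of a clip in seconds, kept exact as a fraction."""
    return Fraction(frames, fps)

# Figures quoted in the article (hypothetical variable names).
base_frames, base_fps = 16, 3          # initial low-resolution output
final_frames, final_fps = 128, 24      # after upscaling and frame prediction
base_height, base_width = 24, 48       # 24-by-48 pixels
final_width, final_height = 1280, 768  # 1280x768

base_len = clip_seconds(base_frames, base_fps)
final_len = clip_seconds(final_frames, final_fps)

# How many times more frames and pixels the final video has.
frame_factor = final_frames // base_frames
pixel_factor = Fraction(final_width * final_height, base_width * base_height)

print(f"base clip:  {float(base_len):.2f} s")
print(f"final clip: {float(final_len):.2f} s")
print(f"frames multiplied by {frame_factor}")
print(f"pixels per frame multiplied by about {float(pixel_factor):.0f}")
```

In other words, the clip stays the same length (a little over five seconds). The extra frames make the motion smoother rather than the video longer, while each frame gains several hundred times as many pixels.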

Google says that Imagen Video was trained on 14 million video-text pairs and 60 million image-text pairs. In experiments, they discovered that Imagen Video could produce videos that replicated certain styles, for example Van Gogh’s paintings. It could also handle depth effects to simulate drone-style fly-through videos.

And even more impressive is how the software handled text. It was able to render animated text highly accurately and convincingly.

But the results are still far from perfect. As you can see in the examples, there is still a high degree of noise, artefacts and general oddities. However, at the speed this tech seems to be developing, it won’t be that way for long. And yay, now videographers and video editors can be added to the list of creatives fearful of losing their jobs to AI.

Still, at least Google doesn’t seem to have painting teddy bears with creepily human hands. That’s definitely enough to give me nightmares.

Alex Baker

Alex Baker is a portrait- and lifestyle-driven photographer based in Valencia, Spain. She works on a range of projects from commercial to fine art, has had work featured in publications such as The Daily Mail, Conde Nast Traveller and El Mundo, and has exhibited work across Europe.

2 responses to “Google rivals Meta by releasing Imagen Video, its own text-to-video AI”

At this point of text-to-video technology development, those videos are so freaking bizarre… and I love it :D