Google explains how Pixel phones use AI for astrophotography

Nov 29, 2019

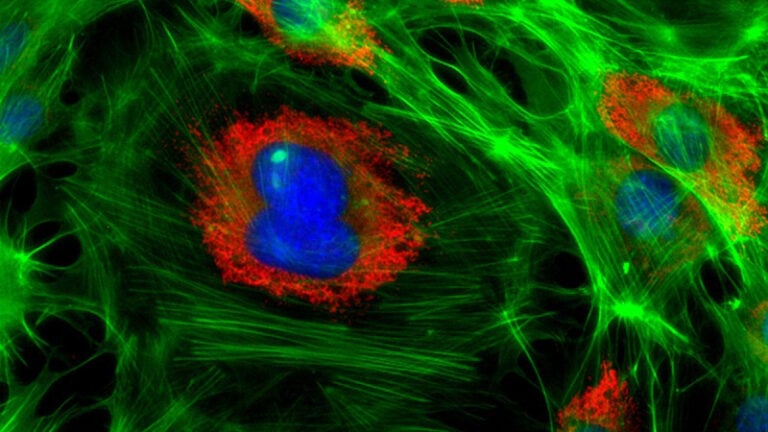

Ever since the first video and images leaked, we've known that the new Google Pixel 4 can shoot astrophotography, even handheld. If you have wondered how a humble smartphone camera can capture the night sky, Google now offers an explanation on its blog.

Florian Kainz and Kiran Murthy, software engineers at Google, have shared some details on Google AI Blog. They write that Night Sight mode was introduced last year with the Pixel 3, and that the Pixel 4 pushes its performance further.

The engineers encountered a number of challenges when developing Night Sight. “Taking pictures of real night skies we found that the per-frame exposure time should not exceed 16 seconds,” they write on the blog. With longer exposures, the stars would start to look like streaks rather than points of light. The 16-second exposure is a “sweet spot”: long enough to capture sufficient light, yet short enough that the stars still look like dots and not like light trails. But there was one problem that couldn’t be avoided: hot pixels.
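To get a feel for why a per-frame limit exists at all, here is a rough back-of-the-envelope sketch of how far a star drifts across the sensor during one exposure. It relies only on the fact that the sky appears to complete one rotation per sidereal day (about 86,164 seconds). The field of view and image width below are illustrative assumptions, not Pixel 4 specifications, and the function name is made up for this example.

```python
SIDEREAL_DAY_S = 86164.1  # one full rotation of the Earth relative to the stars


def star_drift_pixels(exposure_s, fov_deg, image_width_px):
    """Rough drift, in pixels, of a star near the celestial equator.

    Uses a linear angle-to-pixel mapping, which is only an approximation.
    """
    drift_deg = 360.0 * exposure_s / SIDEREAL_DAY_S
    pixels_per_degree = image_width_px / fov_deg
    return drift_deg * pixels_per_degree


# Assumed camera: 77-degree horizontal field of view, 4032-pixel-wide image.
for seconds in (4, 16, 60):
    drift = star_drift_pixels(seconds, fov_deg=77.0, image_width_px=4032)
    print(f"{seconds:>2} s exposure: about {drift:.1f} px of drift")
```

Under these assumptions a 16-second frame smears a star over only a few pixels, while a one-minute frame would stretch it into a visible streak. The numbers shift with the real lens and sensor, so treat them as an order-of-magnitude illustration.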

With Night Sight, Pixel 4 finds and conceals warm and hot pixels, the spuriously bright dots that long exposures bring out:

“Warm and hot pixels can be identified by comparing the values of neighboring pixels within the same frame and across the sequence of frames recorded for a photo, and looking for outliers. Once an outlier has been detected, it is concealed by replacing its value with the average of its neighbors. Since the original pixel value is discarded, there is a loss of image information, but in practice this does not noticeably affect image quality.”
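The idea in the quote can be sketched in a few lines of Python. This is a simplified illustration and not Google's implementation: it looks at a single frame only (the blog also compares across the sequence of frames), it uses the median of the neighbors as the outlier test, and `threshold` is a hypothetical tuning value chosen for the example.

```python
from statistics import mean, median


def conceal_hot_pixels(frame, threshold=50):
    """Return a copy of `frame` with outlier pixels replaced by their neighbor average.

    `frame` is a list of rows of brightness values. `threshold` is an assumed
    value for this sketch, not a figure from Google's post.
    """
    height, width = len(frame), len(frame[0])
    result = [row[:] for row in frame]
    for y in range(height):
        for x in range(width):
            # Collect the up-to-eight pixels surrounding (y, x).
            neighbors = [
                frame[ny][nx]
                for ny in range(max(0, y - 1), min(height, y + 2))
                for nx in range(max(0, x - 1), min(width, x + 2))
                if (ny, nx) != (y, x)
            ]
            # Outlier test: far brighter than the typical neighbor.
            if frame[y][x] - median(neighbors) > threshold:
                # Conceal it with the average of its neighbors.
                result[y][x] = mean(neighbors)
    return result


frame = [
    [10, 12, 11],
    [11, 255, 10],
    [12, 10, 11],
]
cleaned = conceal_hot_pixels(frame)
print(cleaned[1][1])  # the bright center pixel now matches its surroundings
```

The original value of the flagged pixel is thrown away, which is the loss of image information the engineers mention. Because only isolated outliers are touched, the rest of the frame is left unchanged.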

Another challenge was that the sky in long-exposure photos looks brighter than it really is at night. So, Pixel 4’s AI determines where the sky is in a photo and selectively darkens it to make the result more realistic. Sky detection has other uses, too: it allows noise reduction to be applied specifically to the sky, and it lets Night Sight selectively increase contrast to make clouds, color gradients, or the Milky Way more prominent, the blog adds.
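Once a sky mask exists, the selective darkening step itself is simple. The sketch below assumes the mask is already available as per-pixel weights between 0 (not sky) and 1 (sky); how Pixel 4 actually produces and applies its mask is not described here, and `darken_sky` and `sky_gain` are hypothetical names used only for illustration.

```python
def darken_sky(image, sky_mask, sky_gain=0.5):
    """Scale brightness down where the mask says "sky".

    `image` and `sky_mask` are same-sized lists of rows. A mask weight of 1.0
    multiplies the pixel by `sky_gain`; a weight of 0.0 leaves it untouched;
    values in between blend the two so the sky edge stays soft.
    """
    return [
        [pixel * (1.0 - weight * (1.0 - sky_gain))
         for pixel, weight in zip(image_row, mask_row)]
        for image_row, mask_row in zip(image, sky_mask)
    ]


image = [
    [100, 100],
    [100, 100],
]
sky_mask = [
    [1.0, 0.5],  # top row: sky, and a soft edge between sky and ground
    [0.0, 0.0],  # bottom row: landscape
]
print(darken_sky(image, sky_mask))
```

The same mask could drive the other adjustments the article lists, such as stronger noise reduction or a contrast boost applied only where the weight is high.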

“With the phone on a tripod, Night Sight produces sharp pictures of star-filled skies,” the Google engineers write. “As long as there is at least a small amount of moonlight, landscapes will be clear and colorful.” But even though Pixel 4’s AI is pretty powerful, they admit that it has its limits and that there’s always room for improvement.

If you’d like to read more, head over to Google AI Blog for a more detailed explanation and some sample images. Google has also published an article with tips and tricks for better night photos.

[via FStoppers; image credits: FelixMittermeier on Pixabay]

Dunja Đuđić

Dunja Djudjic is a multi-talented artist based in Novi Sad, Serbia. With 15 years of experience as a photographer, she specializes in capturing the beauty of nature, travel, concerts, and fine art. In addition to her photography, Dunja also expresses her creativity through writing, embroidery, and jewelry making.
