Nvidia

Building a Computer on a Budget for Photography and Video

For creative professionals, a high-performance computer is essential – but it doesn’t need to cost thousands more than it should. With the right balance of…

Nvidia’s streaming software now makes your eyes look at the camera even when they’re not

If you have a hard time focusing on Zoom meetings or paying attention to your audience during live streams, then fear no more! Nvidia is…

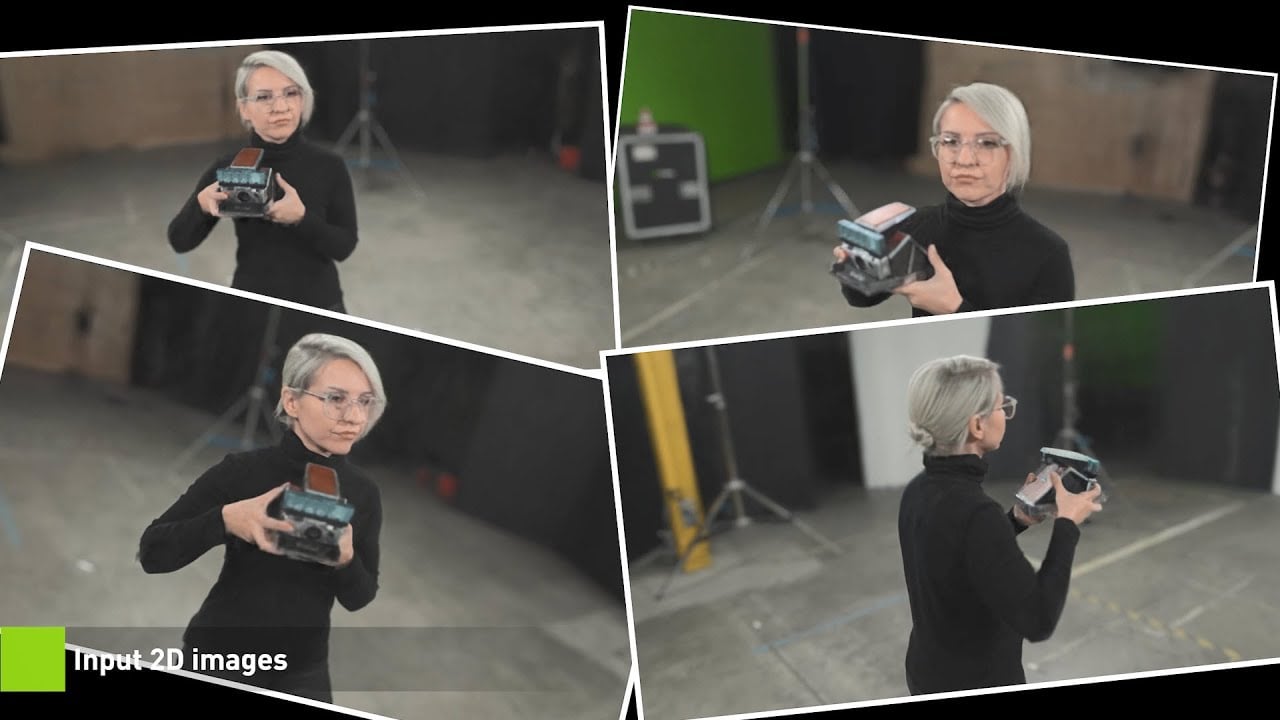

This crazy new NVIDIA tech turns 2D photos into fully 3D scenes in seconds

Creating 3-dimensional worlds and objects from flat 2-dimensional photographs isn’t a new concept. It’s been happening for years. In the early days (and still often,…

New Google Chrome extension helps you spot fake photos with 99.29% accuracy

With the recent rise in AI-generated imagery, creating fake images, even very realistic-looking human faces, has become more widespread and readily available than ever…

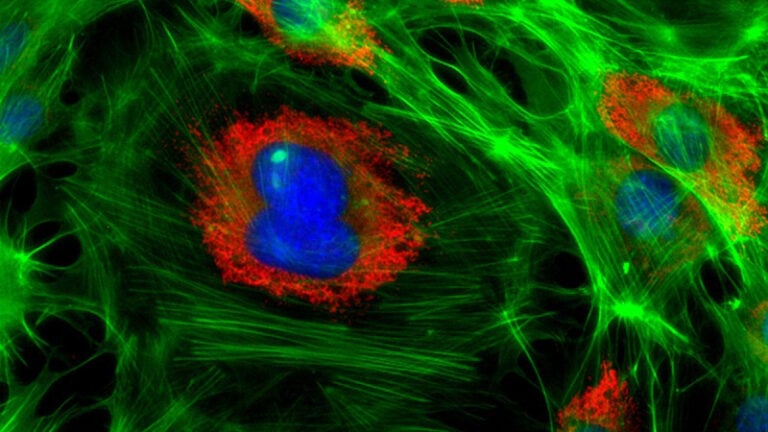

NVIDIA’s GauGAN AI landscape generator can now create scenes from scratch from written descriptions

NVIDIA has been doing a lot of cool stuff with AI. One of those things is GauGAN AI, something of a predecessor to NVIDIA’s Canvas…

We tried recreating landscape photos with AI using NVIDIA Canvas

The whole concept of AI has always been quite fascinating to me. Not so much the end-of-the-world Terminator stuff, but how…

This AI-powered app turns your doodles into realistic “photos”

Nvidia has launched a pretty cool AI-powered app that lets you “take photos” even if you’ve never held a camera. Canvas is a free tool…

NVIDIA launches new RTX 3070 Ti and RTX 3080 Ti graphics cards

Despite the global graphics card shortage, NVIDIA has announced not one, but two new graphics cards. The NVIDIA RTX 3070 Ti and RTX 3080…

NVIDIA launches RTX 3050 mobile GPUs for low-priced laptops for creatives

NVIDIA has today announced its hotly anticipated RTX 3050 and RTX 3050 Ti mobile GPUs for RTX Studio laptops. Designed for both gamers and creatives,…

NVIDIA’s latest RTX Studio drivers boost new AI features from Adobe and Blackmagic

A couple of years ago, graphics card manufacturer NVIDIA decided that it was time to stop focusing just on gamers and launched its “Creator Ready”…