AI Image Tools Are Driving Massive App Downloads, but the Risks Are Growing

May 11, 2026


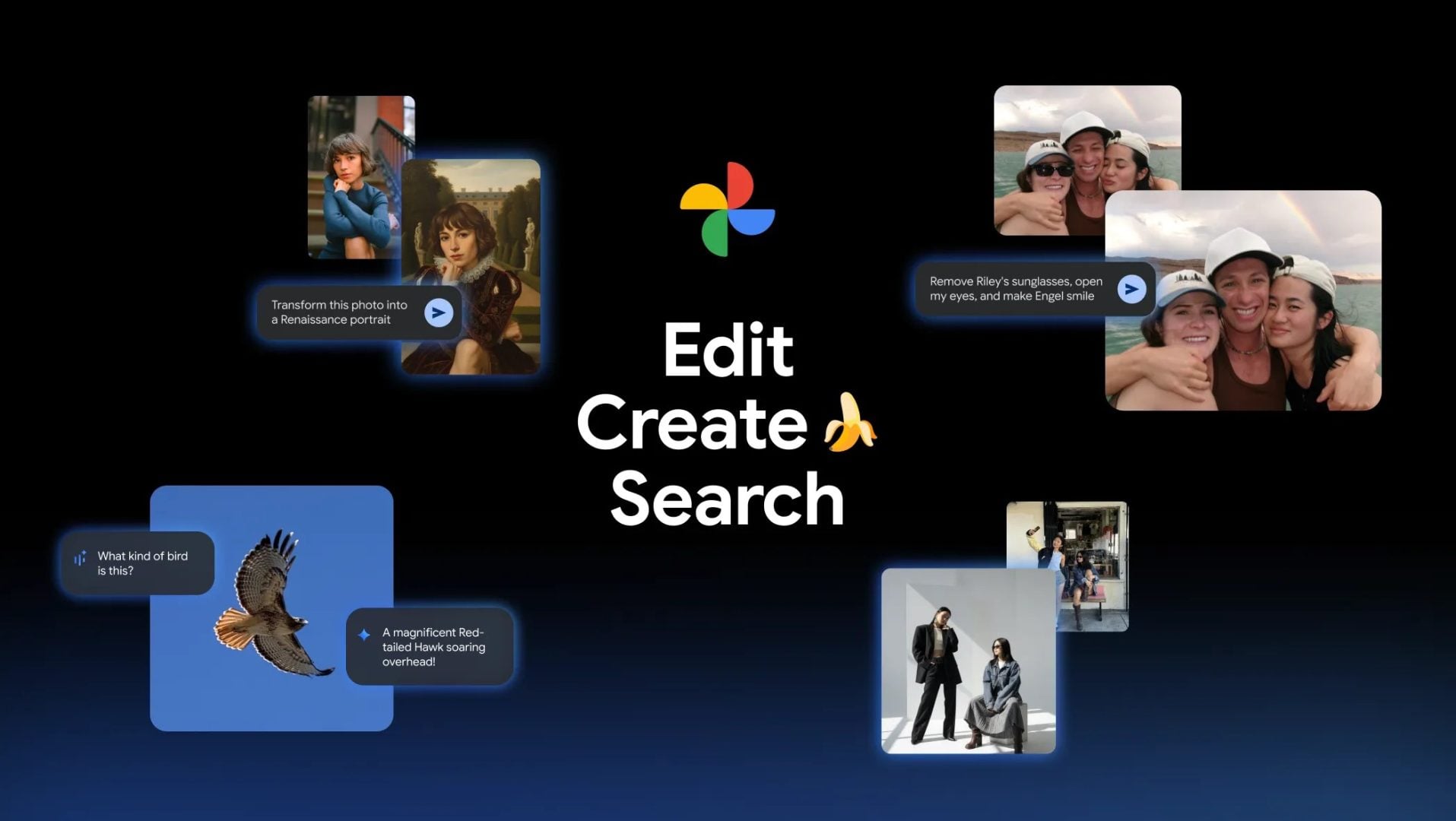

A single image generated in seconds can now do what years of chatbot improvements struggled to achieve: push millions of people to download an app.

That shift is becoming one of the clearest signals in the mobile AI race, where image and video tools are outpacing traditional model upgrades in driving installs and revenue growth, according to a report by Appfigures Intelligence.

The data shows a consistent pattern. ChatGPT added an estimated 12 million incremental downloads in the 28 days following the release of 4o image generation in March 2025. "Incremental downloads" refers to installs above the app’s existing baseline, not total downloads.
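As a rough illustration of that definition, the sketch below subtracts an expected baseline from the installs recorded in a launch window. The report does not describe how Appfigures models the baseline, so the flat per-day baseline, the function name `incremental_downloads`, and all figures here are assumptions made for the example.

```python
from typing import Sequence


def incremental_downloads(daily_downloads: Sequence[int], baseline_per_day: float) -> float:
    """Return installs above a flat daily baseline over the whole window."""
    total = sum(daily_downloads)
    # Installs the app would be expected to get anyway (assumed flat baseline).
    expected = baseline_per_day * len(daily_downloads)
    return total - expected


# Hypothetical figures for illustration only, not data from the report.
launch_window = [900_000] * 28   # daily installs in the 28 days after a launch
baseline = 500_000               # assumed typical daily installs before the launch

print(incremental_downloads(launch_window, baseline))
```

A real estimate would likely use a baseline that accounts for seasonality and existing growth rather than a single flat number.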

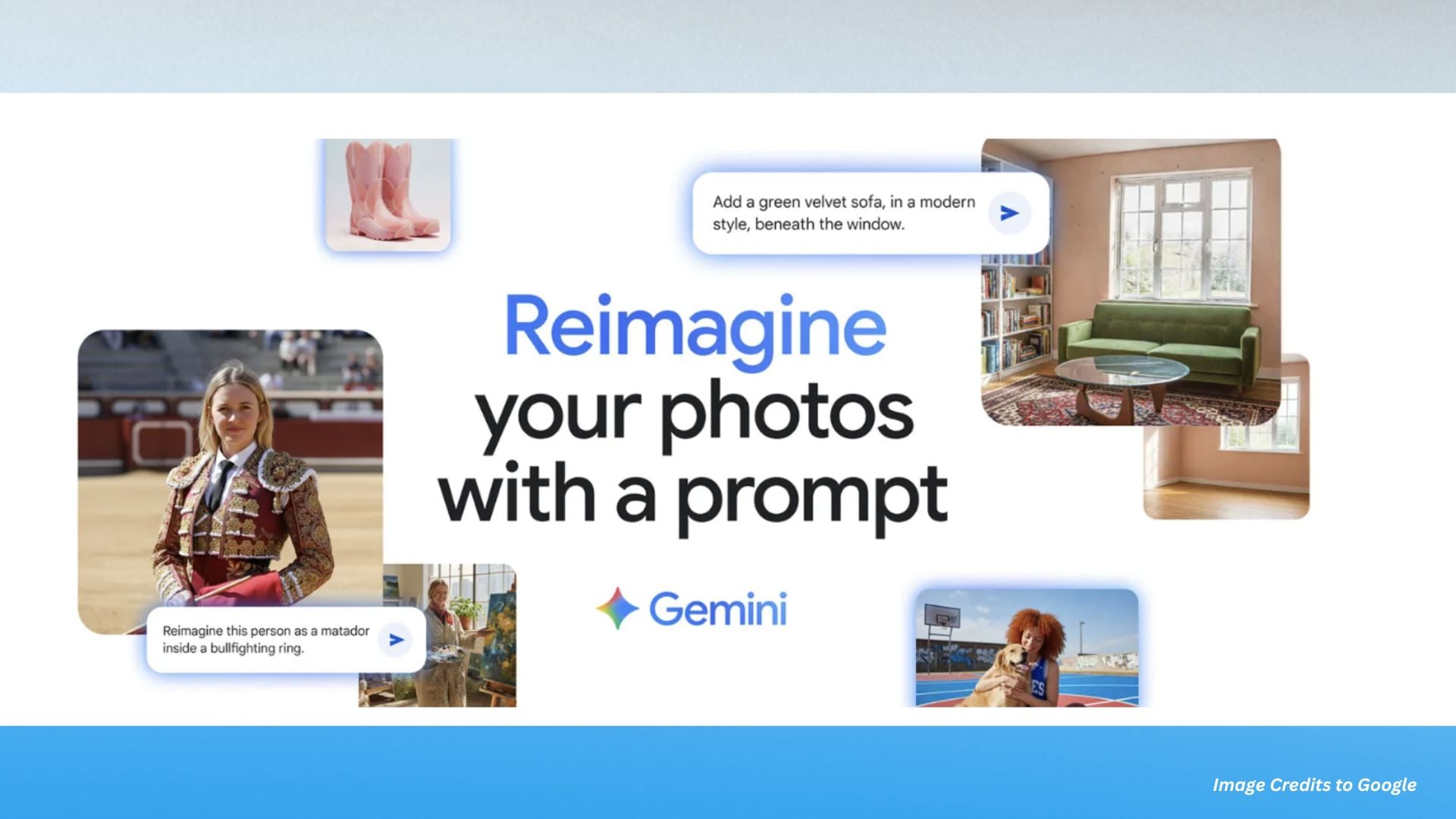

Google Gemini saw an even larger surge. After the introduction of Gemini 2.5 Flash Image, also referred to as Nano Banana, the app gained about 22 million incremental downloads in a similar 28-day period.

Across multiple tracked launches, image and video features drove around 6.5 times more incremental downloads than standard model updates. ChatGPT’s image release alone generated roughly 4.5 times more downloads than its other major model upgrades, such as GPT-4o and GPT-4.5, over comparable timeframes.

Why Visual AI Spreads Faster

The pattern points to a simple shift in behavior. Users are far more likely to try tools that produce visible results instantly than tools that offer abstract improvements in reasoning or conversation quality.

Visual output changes how AI is shared. A chatbot response tends to stay inside the app, while an AI-generated image or short video can be posted, reposted, and circulated across social platforms within seconds.

That shareability is acting as a growth engine. It turns individual curiosity into public exposure, which then drives more installs. In mobile environments where attention is fragmented, this creates a direct path from feature launch to mass adoption.

Meta AI showed a smaller version of the same effect after introducing its AI video feed, Vibes. The app recorded around 2.6 million incremental downloads in 28 days, reinforcing the trend that visual features drive discovery even on smaller platforms.

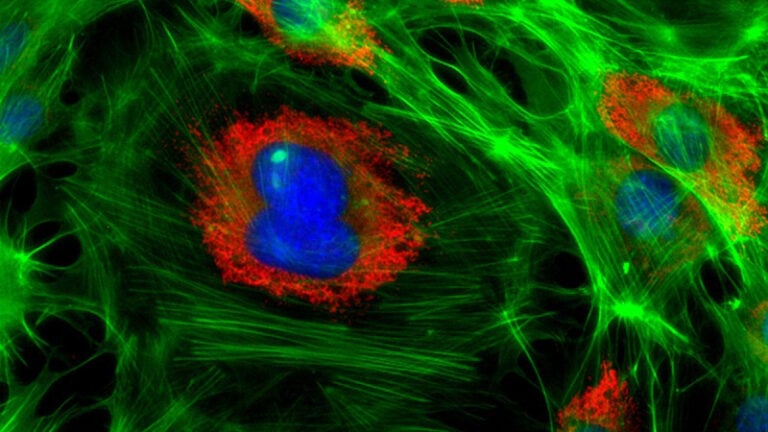

The Dangers Of AI Image Generation

The same accessibility that fuels growth also increases risk. AI image generation tools make it easy to create highly realistic but entirely synthetic visuals in seconds.

This raises concerns about misinformation and manipulated media. Once an image is shared, it can be difficult to verify its origin or determine whether it was generated or altered by AI. That uncertainty becomes more significant as quality improves and tools become more widely available.

There is also the issue of scale. Because these tools require no technical expertise, they can be used broadly, making it harder for platforms to monitor misuse in real time.

Regulators are beginning to respond. Some US states have introduced restrictions targeting nonconsensual AI-generated explicit content. South Korea now requires labels for AI-generated content, while the European Union’s AI Act includes transparency requirements for AI-generated content.

These policies reflect growing concern over how quickly synthetic media can spread and how difficult it is to control once released online.

AI Detection Tools Have Their Own Problems

One complication is that the tools designed to identify AI-generated images are still highly unreliable. As AI image generation improves, detection systems are struggling to keep pace, creating another layer of confusion around authenticity.

There have been multiple cases where AI detectors incorrectly flagged real images as fake. One example involved authentic photographs from Iran documenting mass graves that were mistakenly labelled as AI-generated by detection systems.

The result is an arms race where image generators evolve faster than detection systems can adapt. Some experts now argue that provenance tools, such as camera-based authentication and metadata verification, may become more reliable than trying to guess whether an image “looks AI.”

As competition intensifies across mobile AI apps, the focus is starting to shift from growth to risk. If image generation is now the strongest driver of downloads, the harder question is how to prevent these tools from being used for impersonation, harassment, and synthetic media abuse once they are widely accessible.

Alysa Gavilan

Alysa Gavilan has spent years exploring photography through photojournalism and street scenes. She enjoys working with both film and mirrorless cameras, and her fascination with the craft has grown over the decades. Inspired by Vivian Maier, she is drawn to capturing everyday moments that often go unnoticed.
