ChatGPT now “sees” your photos and talks to you

Sep 26, 2023

OpenAI has introduced exciting new voice and image features in ChatGPT. The AI tool can now “see” your photos and understand what you’re saying to it, which is as impressive as it is scary. These updates are part of what OpenAI calls a “new, more intuitive type of interface,” so let’s dive right in and see what it’s all about.

Talk to ChatGPT and have it talk back

In addition to typing in what you need from ChatGPT, you can now have seamless voice conversations with the tool. Not only can you say what’s on your mind, but ChatGPT can also talk back. And you no longer need a third-party program to do it.

“The new voice capability is powered by a new text-to-speech model, capable of generating human-like audio from just text and a few seconds of sample speech,” OpenAI explains in the announcement. “We collaborated with professional voice actors to create each of the voices. We also use Whisper, our open-source speech recognition system, to transcribe your spoken words into text.”

To enable voice conversations, go to Settings → New Features in the mobile app and opt in.

Chat about images with ChatGPT

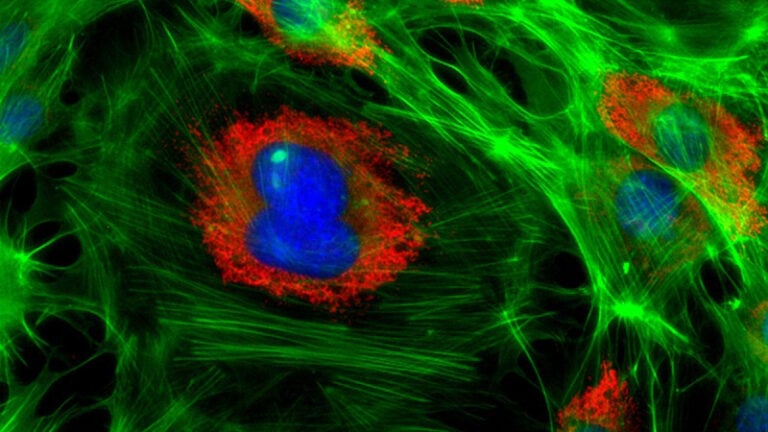

Now for the feature I personally find even more appealing: ChatGPT now supports image interactions. What does this mean? You can share photos to troubleshoot problems, plan meals, analyze complex graphs for work… you name it. You can even use the drawing tool in the mobile app to highlight the specific part of an image you want to discuss. Where was this when my vintage roller blind got stuck and no YouTube video could help me fix it? :)

Perhaps you remember that this summer ChatGPT got a feature that lets you edit photos and interpret code. The upcoming image feature takes this to a whole new level, and frankly, I can’t wait to try it out.

OpenAI explains that image interactions are powered by multimodal GPT-3.5 and GPT-4. These models apply their language reasoning skills to a wide range of images, including photos, screenshots, and documents, to provide coherent responses.

Safety, responsibility, and limitations

OpenAI emphasizes that both features are being rolled out gradually. As the company puts it, it wants to “build AGI that is safe and beneficial.”

“We believe in making our tools available gradually, which allows us to make improvements and refine risk mitigations over time while also preparing everyone for more powerful systems in the future. This strategy becomes even more important with advanced models involving voice and vision.”

OpenAI is still meticulously testing the new voice and vision capabilities to ensure user safety, privacy, and responsible use. While voice technology opens the door to many useful applications, it carries risks, too: it can be misused for malicious purposes such as impersonation and fraud.

“Vision-based models also present new challenges, ranging from hallucinations about people to relying on the model’s interpretation of images in high-stakes domains,” OpenAI notes.

“Prior to broader deployment, we tested the model with red teamers for risk in domains such as extremism and scientific proficiency, and a diverse set of alpha testers. Our research enabled us to align on a few key details for responsible usage.”

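OpenAI wants ChatGPT to assist users in their daily lives by letting them share what they see. The company worked with Be My Eyes, a free app for blind and low-vision people, to understand the feature’s uses and limitations. It has also taken technical measures to limit ChatGPT’s ability to analyze and make direct statements about people, in order to respect individual privacy and make the tool safer to use.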

OpenAI is upfront about ChatGPT’s limitations and advises against relying on the model for highly specialized topics. It also notes that you shouldn’t trust transcriptions of non-English speech without verifying them.

Availability

OpenAI says it’s rolling out voice and images in ChatGPT to Plus and Enterprise users over the next two weeks. The features haven’t reached my account yet, so I couldn’t test them. Some examples are available on OpenAI’s website, so check them out. Voice is coming to iOS and Android (you can opt in through your settings), and images will be available on all platforms.

Dunja Đuđić

Dunja Djudjic is a multi-talented artist based in Novi Sad, Serbia. With 15 years of experience as a photographer, she specializes in capturing the beauty of nature, travel, concerts, and fine art. In addition to her photography, Dunja also expresses her creativity through writing, embroidery, and jewelry making.
