Canon hails groundbreaking 1-megapixel SPAD image sensor as the “eye of the future”

May 31, 2021

Canon has developed what everybody thought to be impossible. And while it might not be the highest-resolution camera sensor out there, Canon’s new 1-megapixel Single Photon Avalanche Diode (SPAD) sensor offers some huge benefits over more traditional CMOS or CCD sensors, the biggest being that it all but eliminates noise from your images.

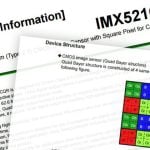

It differs from CMOS sensors in the way that it captures light. CMOS sensors measure the volume of light hitting each pixel as an analogue electrical signal, which introduces noise before that signal is converted to digital. SPAD sensors instead count each individual photon that hits a pixel and put out a true digital signal.

In a technical article published by Canon about the new technology, they highlight some of the applications for the new sensor, including augmented and virtual reality, high frame rate shooting speeds, robot automation, computer vision and driverless vehicles.

SPAD sensors are a type of image sensor. The term “image sensor” probably brings to mind the CMOS sensors found in digital cameras, but SPAD sensors operate on different principles.

Both SPAD and CMOS sensors make use of the fact that light is made up of particles. However, with CMOS sensors, each pixel measures the amount of light that reaches the pixel within a given time, whereas SPAD sensors measure each individual light particle (i.e., photon) that reaches the pixel. Each photon that enters the pixel immediately gets converted into an electric charge, and the electrons that result are eventually multiplied like an avalanche until they form a large signal charge that can be extracted.

The article also speaks of the challenges faced when developing SPAD sensors with high pixel counts. Yes, when it comes to SPAD, 1-megapixel is most definitely considered a high megapixel count and Canon’s new development represents the highest resolution SPAD sensor that exists today.

Until recently, it was considered difficult to create a high-pixel-count SPAD sensor. On each pixel, the sensing site (surface area available for detecting incoming light as signals) was already small. Making the pixels smaller so that more pixels could be incorporated in the image sensor would cause the sensing sites to become even smaller, in turn resulting in very little light entering the sensor, which would also be a big problem.

However, Canon incorporated a proprietary structural design that used technologies cultivated through production of commercial-use CMOS sensors. This design successfully kept the aperture rate at 100% regardless of the pixel size, making it possible to capture all light that entered without any leakage, even if the number of pixels was increased. The result was the achievement of an unprecedented 1,000,000-pixel SPAD sensor.

As well as eliminating virtually all noise from the image the camera sees, the new sensors also offer some incredible readout speeds. Canon equipped the new SPAD sensor with a global shutter capable of shooting up to 24,000 frames per second with 1-bit (black & white) output, with exposure times as fast as 3.8 nanoseconds.

Do you realise just how fast that is? 3.8 nanoseconds is 3.8 billionths of a second. That’s basically a 1/263,157,895th of a second shutter speed. With a global shutter. As Canon points out, the possibilities with such a sensor for scientific monitoring and observation purposes are great indeed, allowing scientists to see things that were previously impossible.
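As a quick check of that shutter-speed figure, the denominator is simply the reciprocal of the exposure time. The sketch below uses exact fractions to avoid floating-point rounding; the variable names are illustrative and not taken from Canon's material.

```python
from fractions import Fraction

# Shortest exposure quoted in the article: 3.8 nanoseconds.
exposure_s = Fraction(38, 10) / 10**9

# A shutter speed of "1/N of a second" means N is the reciprocal of the exposure.
denominator = 1 / exposure_s

# Rounded to the nearest whole number for a "1/N s" style shutter speed.
shutter_n = round(denominator)
print(f"1/{shutter_n:,} s")
```

The same reciprocal works for any exposure time, so swapping in a conventional 1/8000 s shutter shows how many orders of magnitude separate the two.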

Given that it’s taken since the 1970s for a company to produce even just a 1-megapixel SPAD sensor, it’s doubtful that we’ll see these coming to cameras for a good while yet – if ever. But if they ever can get this inside the kinds of cameras the likes of us use, oh boy. What a camera that would be!

The whole article is well worth a read if you fancy 5 minutes of geeking out.

[Canon via Canon Watch]

John Aldred

John Aldred is a photographer with over 25 years of experience in the portrait and commercial worlds. He is based in Scotland and has been an early adopter – and occasional beta tester – of almost every digital imaging technology in that time. As well as his creative visual work, John uses 3D printing, electronics and programming to create his own photography and filmmaking tools and consults for a number of brands across the industry.

35 responses to “Canon hails groundbreaking 1-megapixel SPAD image sensor as the “eye of the future””

Square format?

Yes!!!

100% Instagram ready. At least it’s not vertical ;)

I wonder what the file sizes would look like at 20 mp

64bits (uint64_t) per channel per pixel should do it. So about 8x an 8bit image.

It makes absolutely no sense to sample at that bit depth. Most scientific and industrial cameras top out at 14-bits, few reach 16-bits. If you need greater dynamic range, bracket.

Of course bracketing isn’t necessary with this architecture. How long you expose and integrate ultimately dictates the depth.

You’re absolutely right, but if you want to count every single photon losslessly, you need uint64_t and I bet you that’s what the sensor outputs. 32 bit would mean you could count only 4 billion photons and that’s not enough.

That’s completely absurd. Ridiculous overkill. The shortest exposure this imager is capable of is 3.8 nanoseconds. That’s 3.8×10^-9.

A uint64 is 2^64. Multiply the two to calculate how long the total integration time would be to take full advantage of a 64-bit int.

The answer is: roughly 2,221 YEARS of continuous exposure to fully take advantage of the full range of a 64-bit int. Ain’t NO ONE got time for that! And that is assuming you can read out each exposure as fast as you can take them, which you can NOT.
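The multiplication described in this comment can be reproduced in a few lines. This is only a sketch: it assumes at most one count per 3.8 ns exposure, no dead time between exposures, and a 365.25-day year, and the function name is made up for illustration. Under those assumptions a 64-bit counter takes on the order of two thousand years to fill, while a 32-bit counter fills in seconds.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year (an assumption)
EXPOSURE_S = 3.8e-9                    # shortest exposure quoted in the article

def time_to_fill_counter(bits: int, exposure_s: float = EXPOSURE_S) -> float:
    """Seconds of back-to-back exposures before a `bits`-wide per-pixel
    counter could reach its maximum, at one count per exposure."""
    return (2 ** bits) * exposure_s

seconds_64 = time_to_fill_counter(64)
years_64 = seconds_64 / SECONDS_PER_YEAR
seconds_32 = time_to_fill_counter(32)

print(f"64-bit: about {years_64:,.0f} years")
print(f"32-bit: about {seconds_32:.2f} seconds")
```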

I’ll take that bet! I dug deeper into the operation of this sensor, and it confirms what I said before, and what this article itself clearly states. Each pixel is represented by EXACTLY *ONE* bit. It’s only capable of representing TWO states. A pixel well either saw a photon, or it did not. It is the very definition of black and white, with ZERO shades in between.

Longer exposures do not result in additional accumulation of value. They only increase the likelihood that you’ll capture an event. A one-bit-deep image is read out and integrated with previous exposures to build an image of varying bit depth. The imager itself does not do that.

Think of it as how astronomers do lucky imaging. Many, many short exposures stacked, and processed into an image after the fact.
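The stacking idea can be illustrated with a toy simulation. Everything here is hypothetical: the four-pixel "scene", the per-frame detection probabilities, and the function names are invented for the example, and this is not a model of Canon's actual readout pipeline. Each frame holds only 0 or 1 per pixel; summing many frames after the fact is what produces shades in between.

```python
import random

def capture_binary_frame(detect_prob, rng):
    """One 1-bit frame: a pixel is 1 if it registered a photon, else 0."""
    return [1 if rng.random() < p else 0 for p in detect_prob]

def stack_frames(detect_prob, n_frames, seed=0):
    """Sum n_frames binary frames into per-pixel counts (done off-sensor)."""
    rng = random.Random(seed)
    totals = [0] * len(detect_prob)
    for _ in range(n_frames):
        frame = capture_binary_frame(detect_prob, rng)
        totals = [t + b for t, b in zip(totals, frame)]
    return totals

# Hypothetical scene: chance per frame that each pixel detects a photon,
# from completely dark (0.0) to always lit (1.0).
scene = [0.0, 0.1, 0.5, 1.0]
counts = stack_frames(scene, n_frames=192)
print(counts)
```

With 192 frames stacked, each pixel's total lies between 0 and 192, so brighter pixels end up with larger counts even though no single frame carries more than one bit.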

Says who? That really depends on what you’re trying to do with it. Going by the shortest exposure this imager is capable of, 32-bits still represents a 16.32 second exposure. That’s FAR beyond what most regular photographers would do. You’d have to take a 16.32 second exposure just to reach the top of that 32-bit limit.

Now this is being generous, as I haven’t taken the biggest gotcha into consideration yet.

Under normal circumstances, even at the shortest exposure of 3.8 nanoseconds per frame, a collection of frames for 1/125th of a second could detect at most about 2.1 MILLION photons per pixel, assuming a high-brightness scene like snow, and also assuming you could read frames out at that same rate.

The article says the frame rate is considerably lower: 24,000 frames a second, to be exact. Alas, the slower readout rate integrated over the previous example of 1/125 would yield 192 frames, or about 7.6 bits’ worth of resolution. Not great.
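The bit-depth estimate for a 1/125 s integration follows from counting how many 1-bit frames fit in that window. This sketch assumes each frame contributes at most one count per pixel, so a stack of N frames can take N + 1 distinct values (0 through N); the names are illustrative.

```python
import math

FPS = 24_000           # maximum 1-bit frame rate quoted in the article
SHUTTER_DENOM = 125    # integrate for 1/125 of a second

# Whole frames that fit into the integration window.
frames = FPS // SHUTTER_DENOM

# A per-pixel sum of `frames` binary values ranges from 0 to `frames`.
levels = frames + 1
effective_bits = math.log2(levels)

print(frames, levels, round(effective_bits, 2))
```

That lands between 7 and 8 bits, which is less tonal resolution than an ordinary 8-bit JPEG channel.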

This imager simply isn’t made for conventional photography. It’s special purpose, and intended for scientific and industrial applications where noise rejection, super low light sensitivity, and high speed are critical factors.

TIFF6, PDF (in which TIFF is a native type), and ILBM-IFF (think mid-90s Amigas) are the only integer image types I know of that even claim to support 64-bit depth on paper. While it is technically supported, the vast majority of libraries I found only cope with 32-bit depth and 24-bit display.

Professional astronomers use FITS (Flexible Image Transport System), which supports unsigned 8-bit bytes, 16, 32, and 64-bit signed integers, and 32 and 64-bit single or double precision floating point reals, using the ANSI/IEEE-754 standard.

I can find no evidence of anyone using uint64. At any rate, 64-bit depth is an enormous WASTE for this particular sensor.

If individual frames are only 1bit, rather than a photon *count* then yes, you’re right, even with a lot of stacking. OpenCV supports uint64 but you’re also right, I don’t know of many functions that actually support it. Yes, it is absurd overkill if we’re not counting actual photons here one by one.

> If you need greater dynamic range, bracket.

Not useful for moving subjects. Exactly what this kind of sensor solves. There’s no dynamic range. The *entire* range is captured. Uint64 is big enough to count every single photon in mostly any situation. 32 bits is not enough.

> How long you expose and integrate ultimately dictates the depth.

That’s not useful from a hardware or software standpoint. Having the sensor output a 64-bit unsigned integer is the easiest way of doing it. If those integers fit into 32 bits or fewer, then by all means, chop them in half after the fact.

I think you grossly misunderstand how this sensor works, and what its intended purpose is.

Again, this sensor doesn’t work the way you think it does.

Right. There’s *ONLY* detected photons, or not within a given exposure. It’s BINARY. Full stop.

Sigh, no. Any “range” is the result of how many exposures were captured serially, and what they were processed into.

> Uint64 is big enough to count every single photon in mostly any situation.

While technically true, the time it would take, at 24,000 frames per second, to realize the range a 64-bit int would provide would mean having to collect frames for….

768.6 TRILLION SECONDS, or roughly 24.4 MILLION YEARS!!! Again, ain’t NO ONE got time for that!

Don’t believe me? Do the stinking MATH!

(2^64) / 24,000fps = 768.6×10^12 seconds

Hell, even for a uint32 it would take 49.71 HOURS of continuous integration at 24,000fps to roll over.
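Since the quotient of a counter range and a frame rate is a number of seconds, it helps to convert units explicitly. The sketch below assumes one count per frame at most and a 365.25-day year; `rollover_seconds` and `bits_needed` are hypothetical helper names. It also turns the question around and asks how wide a counter a given integration time actually needs.

```python
import math

FPS = 24_000                           # 1-bit frame rate quoted in the article
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year (an assumption)

def rollover_seconds(bits: int, fps: int = FPS) -> float:
    """Seconds of continuous integration before a `bits`-wide counter wraps."""
    return 2 ** bits / fps

def bits_needed(integration_s: float, fps: int = FPS) -> int:
    """Smallest counter width that can hold one count per frame."""
    max_count = math.floor(integration_s * fps)
    return max(1, max_count.bit_length())

hours_32 = rollover_seconds(32) / 3600
years_64 = rollover_seconds(64) / SECONDS_PER_YEAR

print(f"uint32 rolls over after about {hours_32:.2f} hours")
print(f"uint64 rolls over after about {years_64:,.0f} years")
print(f"a 1-second integration needs {bits_needed(1.0)} bits")
```

Under these assumptions a full second of integration at 24,000 fps fits in a 15-bit counter, so a 16-bit integer per pixel already leaves headroom.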

LMAO! Buddy, it’s enough for everyone else.

That IS the reality of it, whether you comprehend it or not. Maybe try re-reading this article over and over until it sinks in.

Boy, wouldn’t it!? Too bad it doesn’t work that way at all. Not even remotely close. The fact is, you COMPLETELY do NOT understand what any of this is.

You can’t output 64 bits from a 1 bit input!

Not with that attitude you won’t!!!

/S

Ah nice! thanks for the reply!

Exactly the same as any other sensor at 20 MP… Duh?

This sensor isn’t capturing more data, it’s capturing better data.

Do you have actual measured data on how big these files would be at 20 MP? No? Then how do you know? It’s a new technology; you don’t know for a fact what those file sizes are going to look like. Drop the attitude. If you don’t have anything constructive to contribute, then don’t say anything at all.

A single 20MP exposure would take up 2.5 megabytes assuming bit-packing.
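The 2.5-megabyte figure follows from storing eight 1-bit pixels per byte. Here is a minimal sketch of that packing; the most-significant-bit-first ordering and the function names are assumptions made for the example, not a description of any real SPAD file format.

```python
def packed_size_bytes(n_pixels: int) -> int:
    """Bytes needed to store n_pixels 1-bit values, eight per byte."""
    return (n_pixels + 7) // 8

def pack_bits(bits) -> bytes:
    """Pack a sequence of 0/1 pixel values into bytes, MSB first."""
    out = bytearray(packed_size_bytes(len(bits)))
    for i, b in enumerate(bits):
        if b:
            out[i // 8] |= 0x80 >> (i % 8)
    return bytes(out)

size_20mp = packed_size_bytes(20_000_000)
print(size_20mp)                                   # 2500000 bytes = 2.5 MB
print(pack_bits([1, 0, 0, 0, 0, 0, 0, 1, 1]).hex())  # 8180
```

This covers a single binary frame only; a stacked image built from many frames needs more bits per pixel, depending on how many frames are summed.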

Thank you for that straight forward reply that answered my question! You enjoy your day!

Huh, it’s almost 2 years since they invented this sensor

No one else going to throw out that light isn’t composed of particles? This is very interesting though. I think the article has done a bad job explaining what’s going on though. It still generates electrons in response to certain radiation frequencies but it does it more accurately. It doesn’t count each photon because… well… haha, you can’t.

No, because those of us who paid attention in school know light behaves like both a wave *AND* a particle, and there’s easily over 100 years of empirical evidence to substantiate that FACT.

Photons *ARE* discrete units of light, whether you “believe” it or not. If you’ve got credible evidence to the contrary, the burden of proof is on YOU.

Hi Glen. I’m really happy you replied. Answer me this, it’s not a riddle, if you have 10 photons and they pass through a polarized light filter that lets 50% through and another light filter that lets 50% through, how many are you left with? What happens to the same 10 photons when you have the same experiment but add a filter that blocks 25% of photons between the two 50% filters? And why? :)

Hello People:

Although I’m not a physicist, much less a quantum physicist, this discussion you have here is far too interesting for me to ignore!

I’ve been trying to study and understand (as well as most successful scientists) all these concepts of light, i.e. electromagnetic “particles” for decades.

That said, we can agree that NOBODY can FULLY understand it yet, much less describe it inside the nuclear /molecular particles realm we live, directly see, and experiment with.

Only through quantum physics (quantum electrodynamics, in this case) can we get a very approximate “verdict” about who’s right or wrong in this discussion.

My humble guess is that you are both right and wrong at the same time!

And yes that’s possible. But only inside the quantum physics realm.

Everything that “exists” (including light) is made of quantum “particles” and “sub-quantum particles”. But, at this incredibly small level the simple particle concept (and mechanics) is a very far and sometimes inappropriate approximation.

That’s where you can find the “answer” or “explanation” to your “light filter” experiment.

Because, quantumly speaking, subatomic particles (extremely small chunks of pure energy) traveling in a vacuum at speed c not only behave like both “particles” and “waves”; they can also exist in two different places at the same time.

That’s also “true” (?) for the quantum “units” that make up all matter, antimatter, atoms, electrons, etc.

All these “novel”, mind-blowing concepts are currently being studied and experimented with at particle accelerator labs.

The “discovery” of the God particle and other hypothetical mathematical “demonstrations” are just examples of how complex a true answer to your question can be.

Yay great comment Jaoquin. Very well put. Now, while I think saying we’re both right is very tactical and generous of you, I think you’re also tapping into something there. There are schools of belief (collapsing wave function or hidden variables theorem) that would say quantum effects exist until classical phenomena occur, then things stop being quantum and become classical. Well, things like the quantum eraser experiment show that this is probably not the case. But that wouldn’t mean classical particles don’t exist – just that their existence is negotiable. It’s all very cool and becoming a necessity for understanding contemporary electronics. The point I’m making is that this article is written quite poorly compared to Canon’s own website discussing the new sensor. We definitely shouldn’t talk about counting photons (or electrons!) as if they are golfballs or something else that can be understood intuitively. That doesn’t make this new technology any less real or exciting; it’s just that an article like this, stressing quantities rather than phenomena as important, could lead to some misunderstandings. Great comment though. Way to bring up the Higgs and everything!

Thanks Conor!

I’m very glad you appreciated my comments. I find yours very interesting, educated and maybe a little harsh to the article’s author. I guess it wasn’t meant for very scientific minds like yours, but for the general public.

And yes, I have a strong tendency (could be a weakness sometimes) to be very diplomatic inside most discussions.

I strongly believe by experience, the more we learn and investigate, the more we realize how little we know about any subject (Einstein once said something like that).

Only God can really know and understand everything.

That last comment might sound very philosophical and religious. Yes, I proudly believe in God, and each day more and more scientists (some former atheists) do.

Back to the camera subject, and bringing it again under the quantum physics/optics lens, I think you’re right that photons can’t be counted like golf balls, much less taken one at a time out of a bag.

But since they seem to be (or behave like) little chunks of electromagnetic energy, “surfing” on a specific wavelength, maybe we can divide and count each wave or part of it as “one photon”.

And that’s what this new optic device is probably doing.

I was recently diagnosed with some malignant cells inside my body. Thank God it was detected in time, and it is at a fairly moderate stage.

I chose to get focused radiotherapy instead of surgery (it will start in a couple of weeks) because it offers almost the same successful outcome, with fewer side effects.

The technology involved in focused radiotherapy has improved a lot over the years.

As the doctor explained, they can actually focus an x-ray beam (invisible photons) on the affected area without touching its surroundings.

As the doctor continued to explain, the photons “knock out” electrons from the atoms of the bad cells’ DNA molecules, creating free radicals that inhibit their duplication. The cells can’t grow anymore and eventually die, and I’m praying to God that I get cured.

You can imagine, when I heard him talk about “photons” and electrons behaving like ping-pong balls, how everything I’ve learned and read about optics and quantum physics came through my mind.

I hope you don’t mind me publicly sharing something so personal under this article. It seems to deviate from the subject, but it’s very relevant to me. It’s a lot easier to find that everything is connected when you’re going through this process.

To conclude, I must say this.

I do believe God can save my life without any human procedure.

But sometimes He sends someone to throw you a lifesaver and a rope when you’re drowning.

It’s up to you to grab it or wait for the next big boat.

Well I wish you the fastest and safest possible recovery Jaoquin. I think what fascinates me most is the nature of light and its ability to be a part of and separate from things. I bet there’s some crossover in our spiritualities there. Very best of luck to you and I really hope you’re ok!

Thanks again Conor!

Yes, light is really awesome.

And I guess spirituality somehow has many things in common with light, too.

Have a great day!

This is an amusing comments section. Move along. Nothing to see here.

Any camera with this sensor will be my eos R replacement.

1 bit output sounds very noisy.

It’s not at all, because the exposure times are incredibly short. Short enough that thermal noise isn’t an issue.

I understand the concept. The digital signal processing is the same kind of thing Sony’s DSD format uses for audio.

I was being more tongue-in-cheek. Also, if you just looked at the data from a single frame, it would be all noise, more or less. So the frame rate is basically a lie when compared to a traditional sensor. To generate a “real” image or video, you need many frames to generate any single frame.

HEY, IF THE SENSOR IS 2 MILLIMETERS BY 2 MILLIMETERS, THEN IT HAS PLENTY OF RESOLUTION!