British police use AI to detect porn, but it mistakes photos of deserts for nudes

Dec 19, 2017

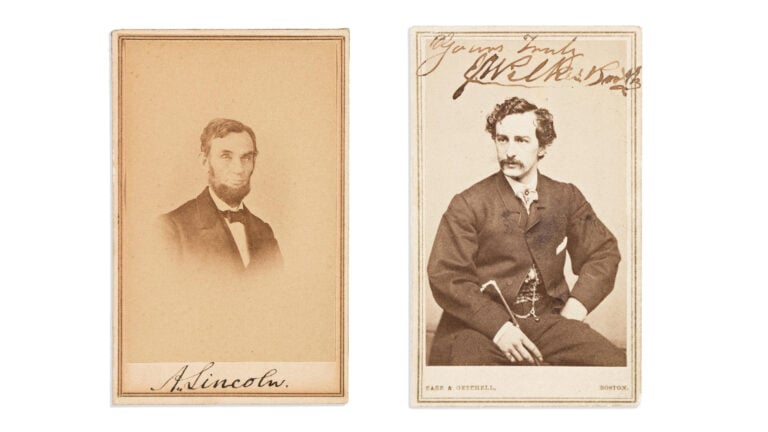

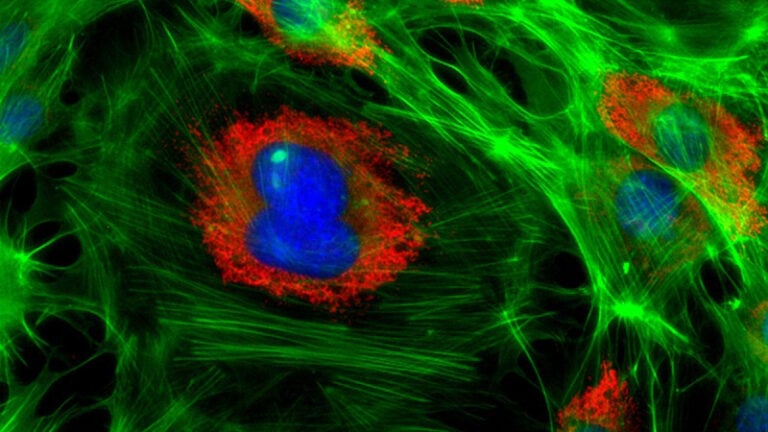

Artificial intelligence is developing fast and has many possible applications. However, it makes mistakes, and this has proven to be a problem for London’s Metropolitan Police. They use AI to detect incriminating images on seized electronic devices, but it’s unreliable when it comes to nudity: it still can’t tell the difference between a nude photo and a photo of a desert.

Mark Stokes, the head of digital and electronic forensics, told the Telegraph that their current software detects photos of guns, drugs, and money on seized computers and phones. However, it keeps mistaking photos of deserts for pornography or indecent images:

“For some reason, lots of people have screen-savers of deserts and it picks it up thinking it is skin colour.”
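To see how a colour-based check could confuse the two, here is a minimal Python sketch. It is not the Met’s software, whose workings aren’t described in the source. It assumes a simple RGB rule of thumb for “skin-coloured” pixels, and the function names and sample colours are made up for illustration.

```python
def looks_like_skin(pixel):
    """Naive RGB rule of thumb for 'skin-coloured' pixels (illustrative assumption)."""
    r, g, b = pixel
    return (
        r > 95 and g > 40 and b > 20               # bright enough in every channel
        and max(r, g, b) - min(r, g, b) > 15       # not grey
        and abs(r - g) > 15 and r > g and r > b    # red is the dominant channel
    )


def skin_fraction(pixels):
    """Share of pixels that pass the colour rule."""
    pixels = list(pixels)
    if not pixels:
        return 0.0
    return sum(looks_like_skin(p) for p in pixels) / len(pixels)


# Hypothetical sample colours, picked by hand for this sketch.
SAND = (194, 154, 108)
SKIN = (224, 172, 138)
SKY = (135, 206, 235)

# A "desert screen-saver": 70% dunes under 30% blue sky.
desert_photo = [SAND] * 70 + [SKY] * 30

print(looks_like_skin(SKIN))        # True
print(looks_like_skin(SAND))        # True: sand passes the same rule
print(skin_fraction(desert_photo))  # 0.7
```

Under these assumptions the dunes count as 70% “skin”, so any check that relies mainly on colour would flag the photo. Telling the two apart takes shape and context, not just colour.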

As the Telegraph writes, the Metropolitan Police scanned 53,000 different devices for incriminating evidence last year. As you can imagine, reviewing this material can be very disturbing for the specialists who spend their careers searching for sensitive and incriminating content. Using artificial intelligence takes this unsettling task away from humans.

Since the incriminating photos often include child abuse, the police force plans to train AI to detect abusive images. There’s apparently still room for improvement, but with the help of Silicon Valley providers, they believe this will be possible “within two to three years.”

Additionally, the police have an ambitious plan to store the flagged images with cloud providers like Amazon, Google, or Microsoft, instead of using their local data center. As Gizmodo writes, this puts a lot of pressure on these services, since none of them are completely immune to security breaches.

I don’t find it unusual that the system mistakes deserts for the human body. Artificial intelligence is prone to mistakes, as machines can’t understand human nuances. After all, sometimes even humans need to look twice. It made me think of “bodyscape” photos [nsfw link]. Still, with such fast development, I believe AI will become far more accurate in the years to come.

[via Gizmodo, the Telegraph]

Dunja Đuđić

Dunja Djudjic is a multi-talented artist based in Novi Sad, Serbia. With 15 years of experience as a photographer, she specializes in capturing the beauty of nature, travel, concerts, and fine art. In addition to her photography, Dunja also expresses her creativity through writing, embroidery, and jewelry making.

18 responses to “British police use AI to detect porn, but it mistakes photos of deserts for nudes”

that’s about skynet is takin over :-)

Pretty hot.

It’s artificial, but it’s not intelligent.

Facebook’s photo-recognition software does the same thing. I had to argue with them several times about photos of puppets because they claimed it was nudity. The puppet had bare feet. Duh. Not much intelligence there.

British AI, hahaha!!!

Is it British or Iranian?

Taking dunes for nudes, it will be a while before AI takes over…

Daniel Chekalov I am very familiar as I’m working in this area, just have been making a joke / play on words.

Daniel Chekalov You have been presented with something called humor.

that hill with something on top does look like a nipple to be honest…

What if the porn star’s name happens to be Sandy?

Full name Sandy Sahara.

And then they searched for bottoms and found you! HAHA

Well nudes and dunes use the same letters..

Porn is haram. No porn for Britain!

“Are you Sarah Connor?”

“Sir, you’ve been standing in front of this painting since the museum opened, but we’re closing now. Are you alright?”

“I need your boots, your clothes, and this painting.”

SEND DUNES
